How to Automate Support Deflection Using AI Inside Your Product

Published on April 7, 2026

Learn how to deflect support tickets with in-product AI, from auditing and structuring your knowledge base to choosing the right in-app touchpoints, setting smart escalation paths, and measuring what actually moves deflection rate.

The short answer: You automate support deflection by embedding an AI layer, trained on your knowledge base, directly into your product, so users get instant answers before they ever file a ticket.

Introduction

Support tickets are expensive. Industry benchmarks put the cost of a single human-handled ticket at $15–$50, depending on complexity and channel. Multiply that by thousands of tickets a month and the math gets uncomfortable fast.

But here's what most product and CX teams miss: the majority of those tickets are answerable. Users aren't filing tickets because the answer doesn't exist — they're filing tickets because they couldn't find it fast enough. Fix the findability problem and you deflect the ticket before it's ever created.

AI-powered support deflection does exactly that. This guide walks through what it is, how it works, and how to implement it inside your product — not just bolted onto a help center URL most users never visit.

If you're not yet familiar with how AI knowledge bases work under the hood, start with our complete guide to AI knowledge bases before diving in here.

What Is Support Deflection, Really?

Support deflection means resolving a user's question without it ever reaching a human agent. The goal isn't to make support harder to access — it's to answer the question at the moment it's asked, in the place where it's asked.

Traditional deflection relied on static FAQ pages and help center articles. The problem: users had to leave the product, search for the right article, hope it was up to date, and figure out whether it answered their specific question. Most didn't bother.

AI deflection is different because it's:

- Contextual — it understands what the user is trying to do right now

- Conversational — it synthesises an answer rather than returning a list of links

- In-product — it meets users where they already are

- Self-improving — it learns from queries that don't get resolved

Two Approaches: Reactive vs. Proactive Deflection

Before building anything, it helps to know which kind of deflection you're optimising for.

Reactive Deflection

User has a question → searches or asks → gets an AI-generated answer → doesn't need to contact support.

This is the most common pattern: a chat widget, in-app search bar, or contextual help panel powered by your knowledge base. The user initiates; the AI responds.

Proactive Deflection

User reaches a friction point → AI detects it → surfaces relevant help before the user asks.

This is harder to implement but dramatically more effective. Examples include: detecting when a user has been on a billing page for 90 seconds without completing a payment, or surfacing onboarding tips when a user hasn't activated a key feature after 3 sessions.
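The two examples above can be read as simple trigger rules. The sketch below is illustrative only: the `SessionSnapshot` fields, the help-topic names, and the idea that your analytics layer can supply these signals are all assumptions, not part of any specific product's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionSnapshot:
    """Hypothetical per-user signals supplied by your product analytics."""
    current_page: str
    seconds_on_page: float
    payment_completed: bool
    session_count: int
    key_feature_activated: bool


def pick_proactive_help(s: SessionSnapshot,
                        billing_dwell_seconds: float = 90,
                        activation_sessions: int = 3) -> Optional[str]:
    """Return a help topic to surface, or None if no friction is detected."""
    # Rule 1: stalled on the billing page without completing a payment.
    if (s.current_page == "billing"
            and s.seconds_on_page >= billing_dwell_seconds
            and not s.payment_completed):
        return "billing_help"
    # Rule 2: key feature still not activated after several sessions.
    if s.session_count >= activation_sessions and not s.key_feature_activated:
        return "onboarding_tips"
    return None
```

Keeping the thresholds as parameters makes it easier to tune triggers later, which matters because poorly tuned nudges are quickly dismissed.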

For most teams starting out, reactive deflection delivers faster ROI. Proactive deflection is the next step once your knowledge layer is solid.

How to Automate Support Deflection in 5 Steps

Step 1: Audit Your Knowledge Base

AI deflection is only as good as the content it draws from. Before connecting any AI layer, audit what you have:

- Which articles have the highest view counts but lowest satisfaction scores? (These need updating)

- Which support ticket categories have no corresponding help content? (These need creating)

- What's the average age of your documentation? Anything over 6 months in a fast-moving product is suspect.

A clean, current knowledge base is the foundation. Skipping this step is the single biggest reason deflection implementations underperform.
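The three audit questions above can be run as a small script over an export of your articles and ticket categories. This is a minimal sketch: the `Article` fields and the view and satisfaction thresholds are assumptions, and 183 days stands in for "over 6 months".

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Article:
    title: str
    category: str
    views: int
    satisfaction: float  # share of positive ratings, 0.0 to 1.0
    last_updated: date


def audit(articles, ticket_categories, today,
          max_age_days=183, min_views=1000, min_satisfaction=0.6):
    """Return the three lists the audit questions ask for."""
    stale_cutoff = today - timedelta(days=max_age_days)
    covered = {a.category for a in articles}
    return {
        # High traffic but poorly rated: update these first.
        "needs_update": [a.title for a in articles
                         if a.views >= min_views
                         and a.satisfaction < min_satisfaction],
        # Ticket categories with no help content at all: create these.
        "needs_creating": sorted(set(ticket_categories) - covered),
        # Older than roughly six months: review for accuracy.
        "stale": [a.title for a in articles if a.last_updated < stale_cutoff],
    }


articles = [
    Article("Reset password", "account", 5000, 0.4, date(2026, 3, 1)),
    Article("Export CSV", "data", 200, 0.9, date(2025, 1, 15)),
]
report = audit(articles, ["account", "billing", "data"], today=date(2026, 4, 7))
```

With this sample data, `report` flags "Reset password" for an update, "billing" as a category with no content, and "Export CSV" as stale.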

Step 2: Choose Your Integration Point

Where users encounter friction determines where you embed the AI layer. Common integration points:

- In-app chat/widget — appears on demand, usually bottom-right corner, covers the whole product

- Contextual sidebars — appears on specific pages (e.g., billing, settings) with page-aware content

- Onboarding flows — embedded in empty states, tooltips, or walkthroughs

- Search overlays — triggered by Cmd+K or a search icon, returns AI-synthesized answers

The closer the AI is to where users encounter the problem, the higher your deflection rate.

Step 3: Connect Your Knowledge Source

Your AI layer needs to be grounded in your actual product documentation — not generic internet knowledge. This is where a purpose-built AI knowledge base tool (rather than a general-purpose LLM) makes the difference.

At minimum, your knowledge source should include:

- Help center / knowledge base articles

- Product changelogs and release notes

- Onboarding documentation

- Troubleshooting guides

- FAQs

The AI retrieves and synthesises from this corpus. If the content isn't there, the AI can't answer — it'll either hallucinate or fall back to "contact support."
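The retrieve-or-fall-back behaviour can be sketched in a few lines. This example swaps in plain word overlap for the embedding search a real tool would likely use, and the `FALLBACK` wording, the `min_overlap` threshold, and the function names are all hypothetical.

```python
import re

FALLBACK = "I couldn't find this in our docs. Would you like to contact support?"


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, corpus, min_overlap=2):
    """Return (title, body) of the best-matching document, or None.

    corpus maps article title -> body text. Scoring is simple word
    overlap, a stand-in for semantic search.
    """
    q = tokenize(query)
    best_title, best_score = None, 0
    for title, body in corpus.items():
        score = len(q & tokenize(title + " " + body))
        if score > best_score:
            best_title, best_score = title, score
    if best_score < min_overlap:
        return None
    return best_title, corpus[best_title]


def answer(query, corpus):
    hit = retrieve(query, corpus)
    if hit is None:
        # No grounding content: fall back rather than guess.
        return FALLBACK
    title, body = hit
    return f"From '{title}': {body}"


corpus = {
    "Reset your password": "Go to Settings, then Security, and click Reset password.",
    "Export data": "Open Reports and choose Export to CSV.",
}
```

The design choice worth noting is the explicit `None` path: when nothing in the corpus matches well enough, the code returns the fallback instead of producing an ungrounded answer.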

Step 4: Set Escalation Paths

Deflection doesn't mean blocking access to humans. The best implementations have a clear escalation path: if the AI can't resolve the query with sufficient confidence, it surfaces a "Contact support" option with context pre-filled.

This pre-filling is underrated. When an agent receives a ticket that already includes what the user searched for, what the AI suggested, and why that didn't resolve the issue, handle time drops significantly.
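A pre-filled escalation might look like the sketch below. The `AiResult` shape, the 0.0 to 1.0 confidence score, the 0.7 threshold, and the ticket field names are assumptions for illustration; your AI layer and help desk will define their own.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AiResult:
    answer: str
    confidence: float  # hypothetical 0.0 to 1.0 score from your AI layer
    sources: List[str] = field(default_factory=list)


def build_escalation(query, result, threshold=0.7, page=None):
    """Return a pre-filled ticket draft if confidence is too low, else None."""
    if result.confidence >= threshold:
        return None  # confident enough: show the answer, no escalation
    return {
        "user_query": query,
        "ai_suggestion": result.answer,
        "ai_sources": result.sources,
        "unresolved_reason": (
            f"confidence {result.confidence:.2f} below {threshold:.2f}"
        ),
        "page": page,
    }


low = AiResult("Try clearing your browser cache.", 0.35, ["Troubleshooting login"])
draft = build_escalation("can't log in after SSO change", low, page="/login")
```

Here `draft` carries the user's query, the AI's suggestion, and the reason for escalation, so the agent does not have to ask for them again.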

Step 5: Measure, Tune, Repeat

The metrics that matter for deflection:

- Deflection rate — % of AI interactions that don't result in a ticket

- Resolution rate — % of queries where the user rated the answer positively

- Escalation rate — % that fall through to human support

- Coverage gaps — queries where no good answer was found (your content roadmap)

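Review these weekly for the first 90 days. Deflection rate alone can mislead, because a user who gives up without filing a ticket still counts as deflected. Compare it with resolution rate: a wide gap means users are abandoning the AI without getting their answer (a UX or content problem), while a narrow gap means they are genuinely getting resolved (the goal).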
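The four metrics can be computed from a log of AI interactions. This is a minimal sketch under stated assumptions: the `Interaction` fields are hypothetical, an escalation is treated as "a ticket was created", and an interaction with no rating counts as unresolved.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    query: str
    ticket_created: bool
    rating: Optional[bool]  # True = helpful, False = not helpful, None = no rating
    answer_found: bool


def deflection_metrics(interactions):
    n = len(interactions)
    if n == 0:
        raise ValueError("no interactions to measure")
    escalated = sum(i.ticket_created for i in interactions)
    resolved = sum(i.rating is True for i in interactions)
    return {
        "deflection_rate": (n - escalated) / n,
        "resolution_rate": resolved / n,
        "escalation_rate": escalated / n,
        # Queries with no good answer: candidates for new content.
        "coverage_gaps": [i.query for i in interactions if not i.answer_found],
    }


log = [
    Interaction("reset password", False, True, True),
    Interaction("change plan", False, None, True),
    Interaction("refund for duplicate charge", True, False, False),
    Interaction("export csv", False, True, True),
]
m = deflection_metrics(log)
```

In this sample, deflection is 0.75 and resolution is 0.5. The difference comes from the "change plan" interaction, where the user left without a ticket but also without confirming the answer helped.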

Why Most Deflection Implementations Fail

A few patterns we see repeatedly:

Connecting AI to stale content. If your knowledge base hasn't been updated in months, the AI will confidently deliver outdated answers. Users lose trust fast — and they go straight to your support queue.

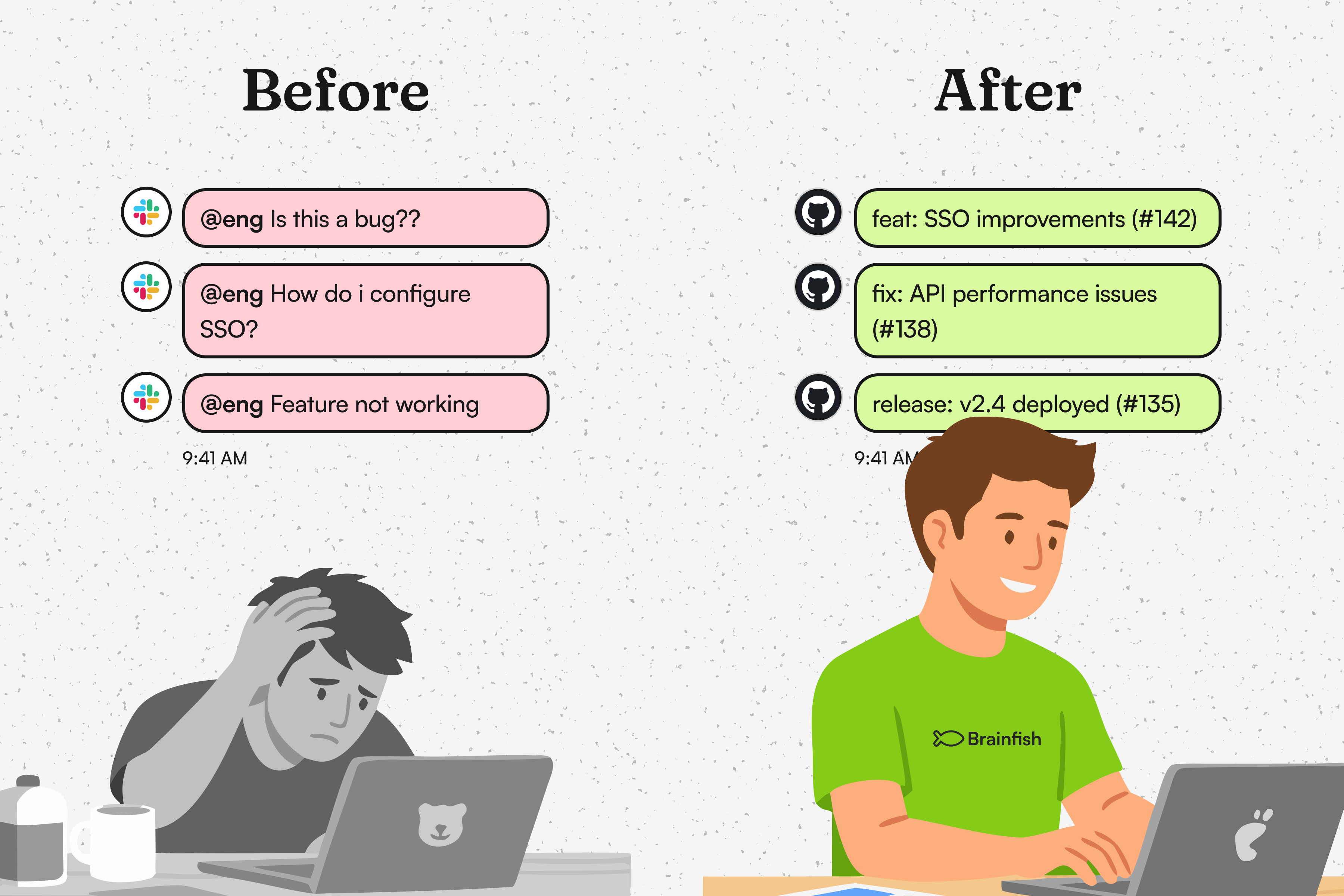

Treating deflection as a support cost project, not a product project. The best deflection implementations have engineering and product involved, not just the support team. If the AI lives outside the product, usage drops off.

No feedback loop. If you're not capturing which queries failed, you have no content roadmap. Failed queries are your highest-signal input for what to write next.

Starting with proactive before nailing reactive. Proactive deflection requires good intent signals, clean user data, and well-tuned triggers. Teams that skip reactive and go straight to proactive end up with intrusive, unhelpful nudges that users dismiss.

What Good Looks Like

A well-implemented deflection system typically achieves:

- 40–60% deflection rate within 90 days of launch (for reactive in-product AI)

- <5 second response time for AI-generated answers

- >70% positive resolution ratings (users confirming the answer helped)

- Escalation to human agents pre-loaded with context, reducing average handle time by 20–30%

These aren't vanity metrics — they map directly to support cost reduction and CSAT improvement.

Getting Started with Brainfish

Brainfish is built specifically for this use case: an AI knowledge layer that sits inside your product, trained on your documentation, and designed to deflect support before it happens.

If you're evaluating AI knowledge base platforms, our complete guide to AI knowledge bases walks through what to look for, what to avoid, and how to get your knowledge base AI-ready.

```python
import time
import requests
from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="percentage", description="Rate of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.time()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."

            latency = (time.time() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)

            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)

            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")

        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5
        }

        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```

Frequently Asked Questions

How do we measure ROI on deflection?

Simplest formula: (tickets deflected per month × average cost per ticket) − cost of AI tooling. Most teams are in positive ROI territory within the first quarter.
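As a worked example of that formula, the sketch below uses illustrative inputs only: 800 deflected tickets a month, $15 per ticket (the low end of the range cited earlier), and a hypothetical $4,000 monthly tooling cost. The function names are made up for this example.

```python
import math


def monthly_deflection_roi(tickets_deflected, cost_per_ticket, tooling_cost):
    """(tickets deflected per month x average cost per ticket) - tooling cost."""
    return tickets_deflected * cost_per_ticket - tooling_cost


def break_even_tickets(cost_per_ticket, tooling_cost):
    """Smallest number of deflected tickets that covers the tooling cost."""
    return math.ceil(tooling_cost / cost_per_ticket)


roi = monthly_deflection_roi(800, 15, 4000)   # 800 * 15 - 4000 = 8000
needed = break_even_tickets(15, 4000)         # ceil(266.67) = 267
```

Under these assumptions the monthly return is $8,000, and the tooling pays for itself once 267 tickets a month are deflected.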

Can we use our existing Zendesk / Intercom help center?

Yes — most AI knowledge base tools can index your existing help center content. The key is keeping that content current and structured. The AI is only as good as what it's trained on.

What content format works best for AI deflection?

Short, structured articles with clear headings perform better than long prose documents. The AI can retrieve and synthesize discrete sections more accurately than it can parse dense narrative text.
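One way to see why headings help is to look at how an article might be split into retrievable sections. The sketch below assumes articles are stored as Markdown and that each heading starts a new section; `split_by_headings` is a hypothetical helper, not any tool's actual ingestion logic.

```python
import re


def split_by_headings(markdown_text):
    """Split a Markdown article into (heading, body) sections."""
    sections = []
    heading, lines = "Introduction", []
    for line in markdown_text.splitlines():
        match = re.match(r"^#{1,6}\s+(.*)", line)
        if match:
            # A new heading closes the previous section, if it had any text.
            body = "\n".join(lines).strip()
            if body:
                sections.append((heading, body))
            heading, lines = match.group(1).strip(), []
        else:
            lines.append(line)
    body = "\n".join(lines).strip()
    if body:
        sections.append((heading, body))
    return sections


article = (
    "# Billing\n\nIntro text.\n\n"
    "## Update your card\nGo to Settings.\n\n"
    "## Download invoices\nOpen Billing history."
)
sections = split_by_headings(article)
```

Each section can then be indexed on its own, so a question about invoices retrieves only the "Download invoices" section rather than the whole article. A long document with no headings would come back as a single large section.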

How long does it take to see results?

Most teams see meaningful deflection rates within 4–8 weeks of going live, assuming the knowledge base is in reasonable shape. Full optimization takes 2–3 months of tuning.

Does AI deflection work for complex technical queries?

For highly complex or account-specific issues, AI deflection works best as a first filter: it handles the common queries (often 60–80% of volume) and routes the complex ones to specialists with context already captured.

What's the difference between support deflection and self-service?

Self-service is the broad category — any time a user resolves their own issue. Support deflection specifically refers to preventing a support ticket from being created. All deflection is self-service; not all self-service is deflection.
