7 things support, CS, and product teams are doing with Brainfish MCP and Claude

Published on April 28, 2026

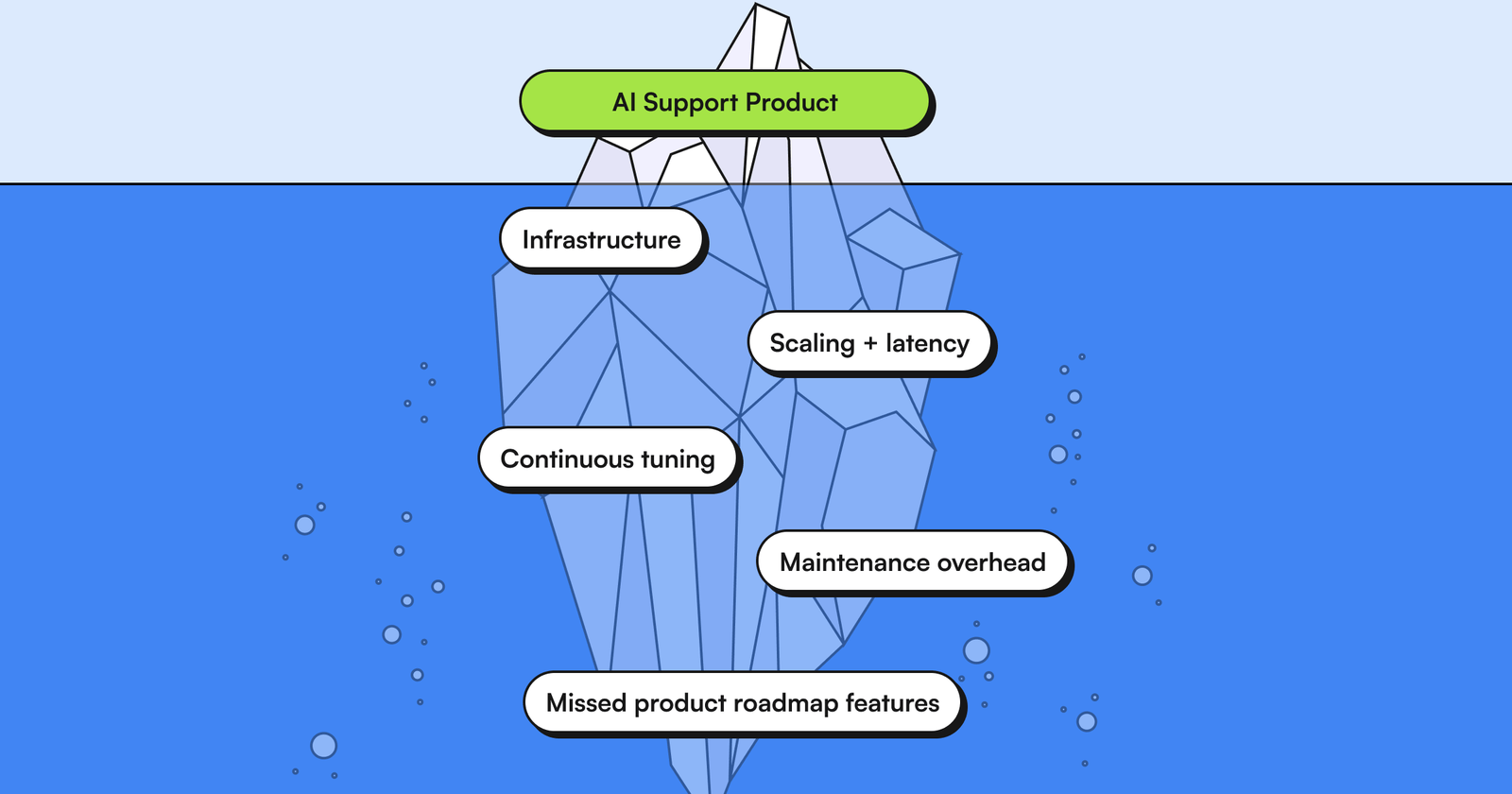

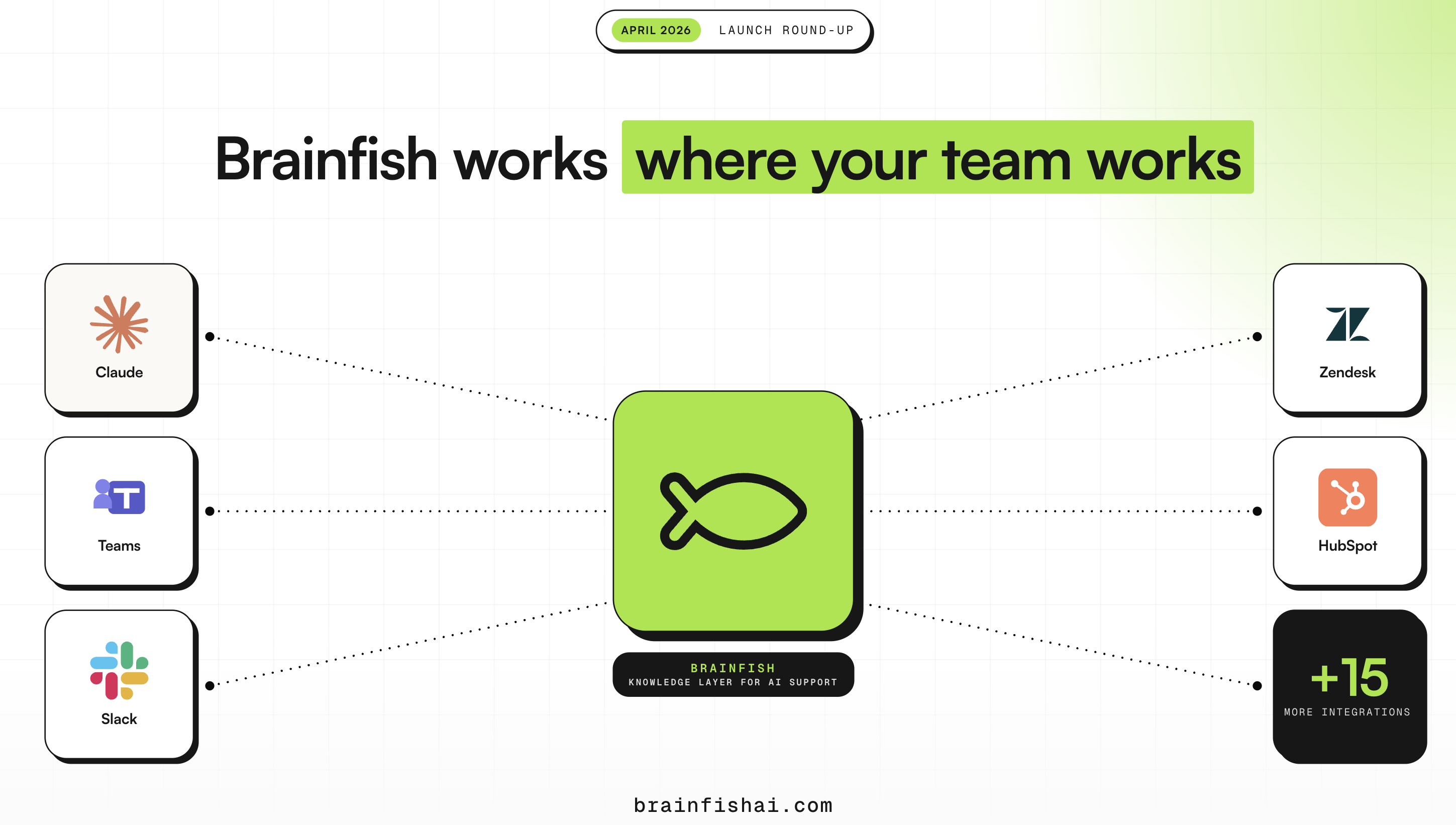

Lately, we've been getting the same message from customers: they've just onboarded their team to Claude, and now they want to know how to pair it with Brainfish. Makes sense. You've invested in a knowledge base, and you try to keep your product knowledge as accurate as possible. You've invested in AI agents. The question is how to get everything talking to each other.

That's what the Brainfish MCP server does. It's a Model Context Protocol connector that links Claude directly to your Brainfish knowledge base so you can search it, read from it, draft into it, and audit it without leaving your AI assistant.

We've been watching what teams actually do once they make that connection. Below are four examples from people who've been running it for a while.

GTM Engineer @ Brainfish: Four workflows in one week

Neville is a GTM engineer at Brainfish, and one of his responsibilities is knowledge management. In the week after connecting MCP, he ran four distinct workflows he'd previously been doing by hand or deferring entirely.

Worth noting: none of this is GTM-specific. If keeping your help docs, knowledge base, or product documentation accurate falls anywhere in your job description, whether you're in support, product, engineering, or GTM, these workflows translate directly.

1) Auditing the entire knowledge base without clicking through the platform

The first thing he did was inventory the workspace. Using the "list_collections" and "list_documents" tools via Claude, he pulled every collection and document, spotted duplicates, flagged stale content, and identified articles that needed to be moved or deleted. All in one conversation.

"Without MCP I'd have been clicking through the platform manually," he said. "Now I run the whole thing in one conversation."

A knowledge base review that used to take two weeks took an afternoon.
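To make the duplicate and stale checks concrete, here is a minimal sketch of the kind of pass Claude can run over the inventory. It is not the Brainfish API: the record fields (`id`, `title`, `updated`), the sample documents, and the 365-day staleness threshold are all assumptions for illustration, and the real `list_documents` output may be shaped differently.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical document records; the real list_documents output may use
# different field names.
documents = [
    {"id": "d1", "title": "Reset your password", "updated": date(2026, 3, 2)},
    {"id": "d2", "title": "Reset Your Password ", "updated": date(2024, 1, 15)},
    {"id": "d3", "title": "Export billing data", "updated": date(2024, 6, 30)},
]

def find_duplicates(docs):
    """Group document ids whose titles match after trimming and lowercasing."""
    by_title = defaultdict(list)
    for doc in docs:
        by_title[doc["title"].strip().lower()].append(doc["id"])
    return {title: ids for title, ids in by_title.items() if len(ids) > 1}

def find_stale(docs, today, max_age_days=365):
    """Return ids of documents last updated more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return [doc["id"] for doc in docs if doc["updated"] < cutoff]

duplicates = find_duplicates(documents)
stale = find_stale(documents, today=date(2026, 4, 28))
print(duplicates)
print(stale)
```

Matching on normalized titles only catches the obvious duplicates. Articles that cover the same topic under different titles still need a human (or Claude) to read them side by side.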

2) Cleaning up information architecture

After the audit came the cleanup. IA work is the kind of thing that stays on the backlog forever. Pulling every document, checking metadata, understanding structure, and deciding what moves where all require too much manual overhead to do regularly. With MCP, Neville pulled the documents into Claude, checked metadata, and batch-flagged articles to move or delete in a single session.

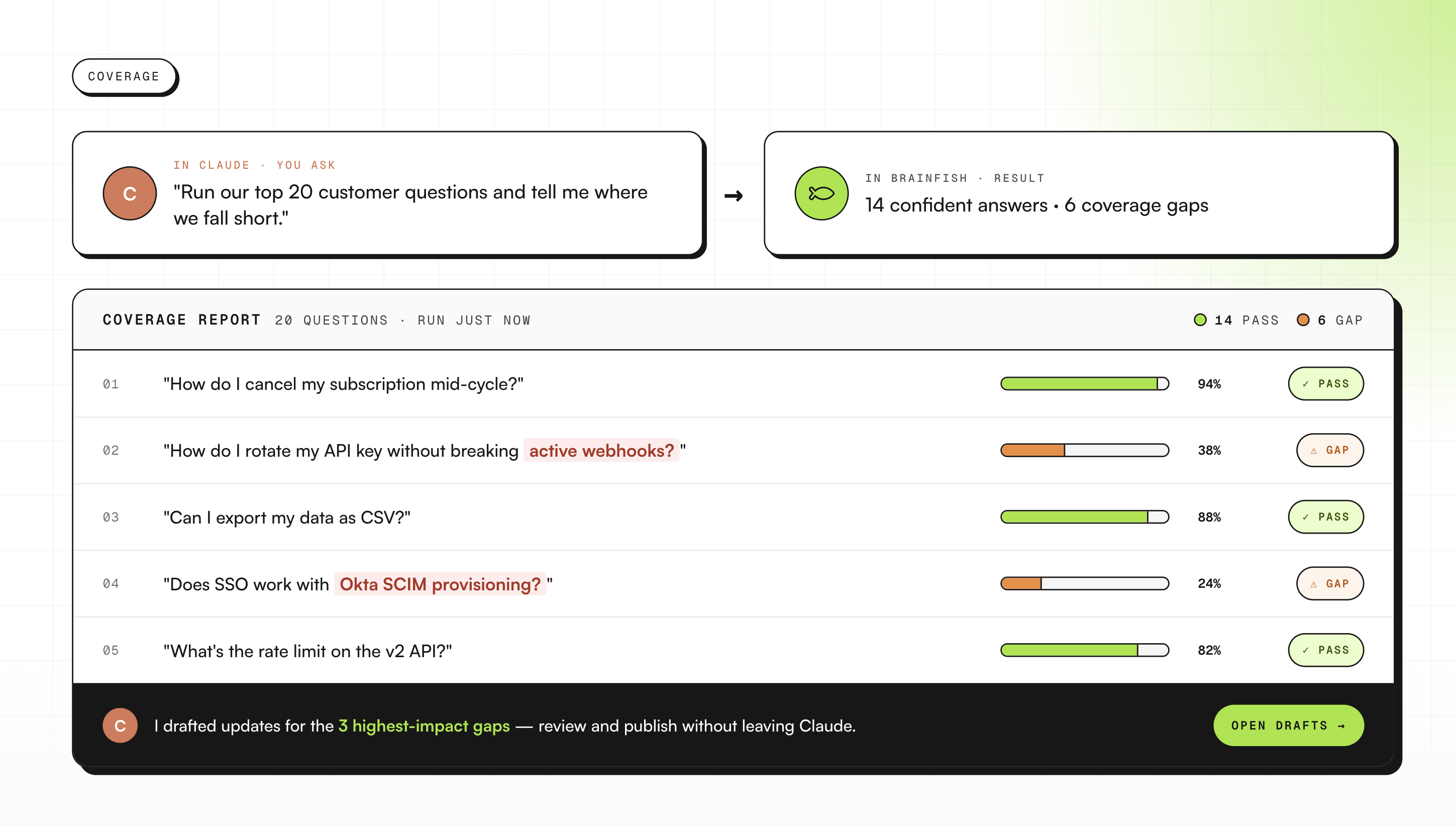

3) Coverage testing: finding gaps before customers do

Neville uses the "brainfish_generate_answer" tool to fire test questions at the knowledge base and check what customers would actually get back. Not what an article says in isolation, but what the AI surfaces when someone asks the question in practice.

For support and knowledge teams, this one has obvious ROI. You find the articles that sound complete but don't hold up under a real question. You find them before a customer does.
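A coverage test of this kind can be sketched as a small loop: ask each question, then check whether the answer mentions what it should. In the sketch below, `ask` is a stand-in for a call to the "brainfish_generate_answer" tool, and the canned answers, test questions, and keyword-matching rule are assumptions for illustration. In practice Claude makes the tool call and judges the answer itself.

```python
def run_coverage_test(cases, ask):
    """Ask each question and report which expected terms are missing.

    cases: list of (question, expected_terms) pairs.
    ask:   callable taking a question string and returning the answer text.
           Here it stands in for a call to the brainfish_generate_answer tool.
    """
    gaps = []
    for question, expected_terms in cases:
        answer = ask(question).lower()
        missing = [term for term in expected_terms if term.lower() not in answer]
        if missing:
            gaps.append({"question": question, "missing": missing})
    return gaps

# Stand-in for the knowledge base so the sketch runs offline.
canned_answers = {
    "How do I reset my password?": "Go to Settings, then Security, and choose Reset password.",
    "Can I export invoices?": "I could not find an answer to that.",
}

def fake_ask(question):
    return canned_answers.get(question, "")

cases = [
    ("How do I reset my password?", ["Settings", "Security"]),
    ("Can I export invoices?", ["CSV", "Billing"]),
]

gaps = run_coverage_test(cases, fake_ask)
for gap in gaps:
    print(gap["question"], "-> missing:", gap["missing"])
```

Keyword matching is deliberately crude. It flags answers that are clearly empty or off-topic, which is usually enough to decide which articles deserve a closer look.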

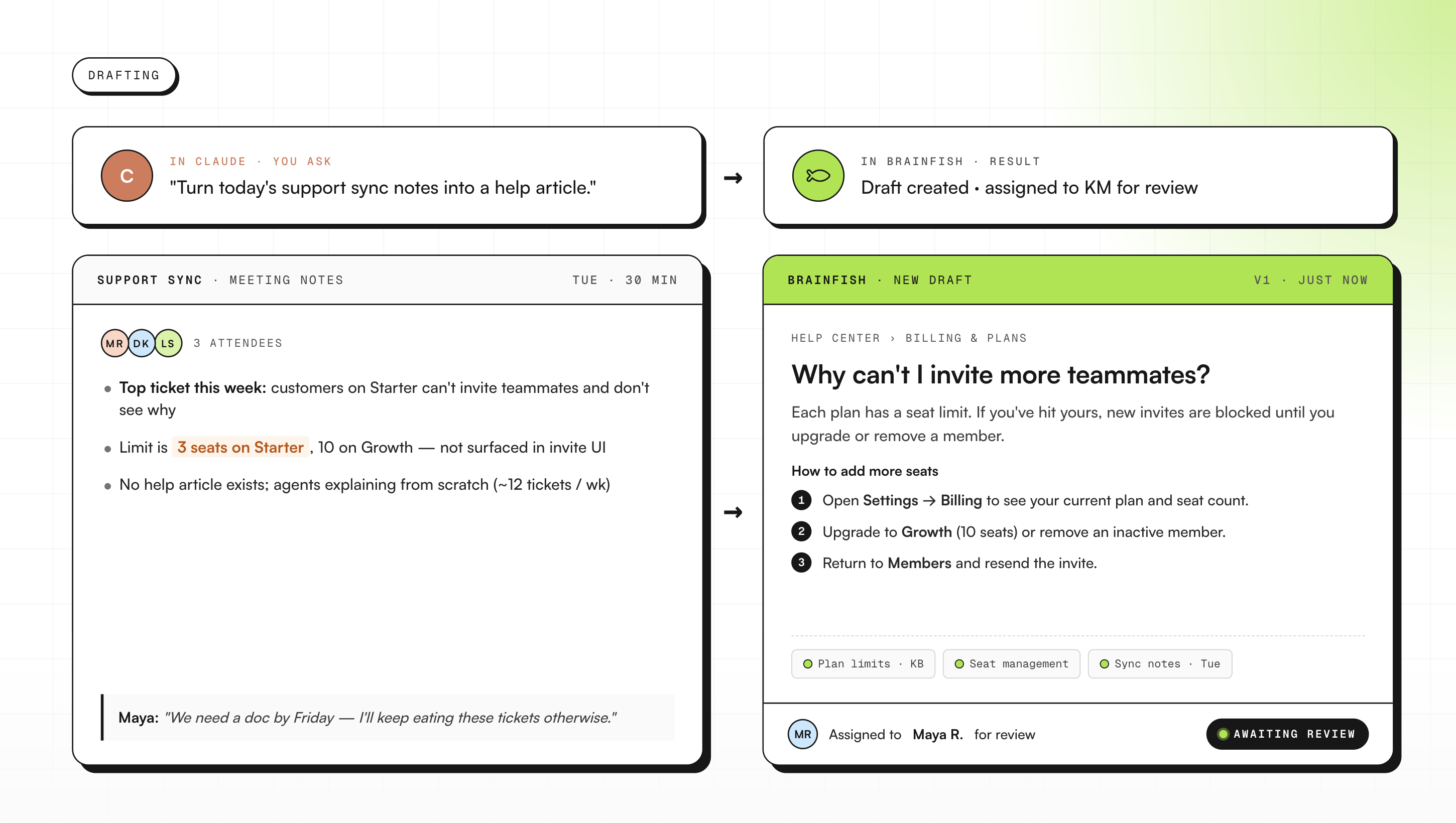

4) Turning meeting notes into published articles

When a product update gets discussed in a meeting, Neville feeds the notes into Claude and asks it to draft a Brainfish article. Claude pushes the draft straight into Brainfish for review.

"No copy-pasting between tools," he said. The article goes from meeting note to draft in review in one conversation.

B2B fintech: Real-time coaching for CS agents

A B2B fintech company's customer success team wanted a way to support agents during live customer conversations without making them tab between three documents. What they built is an "if they say this, you say that" guide grounded live in the Brainfish knowledge base, surfaced inside Claude.

A CS agent can be on a call, type a summary of what the customer just said, and get back a response suggestion built from actual Brainfish content. The specific article, the specific answer, in the moment.

SaaS social platform: Updating the knowledge base without leaving Claude

A SaaS social platform's engineer asked in their shared channel whether Claude could write and update articles via the API. The use case they had in mind was using Claude to draft and update Help Center content without switching tools.

That's what they're doing now. A draft gets written inside Claude, grounded in the existing knowledge base, and pushed into Brainfish for review. The knowledge base stays current without anyone needing to open the platform to do it.

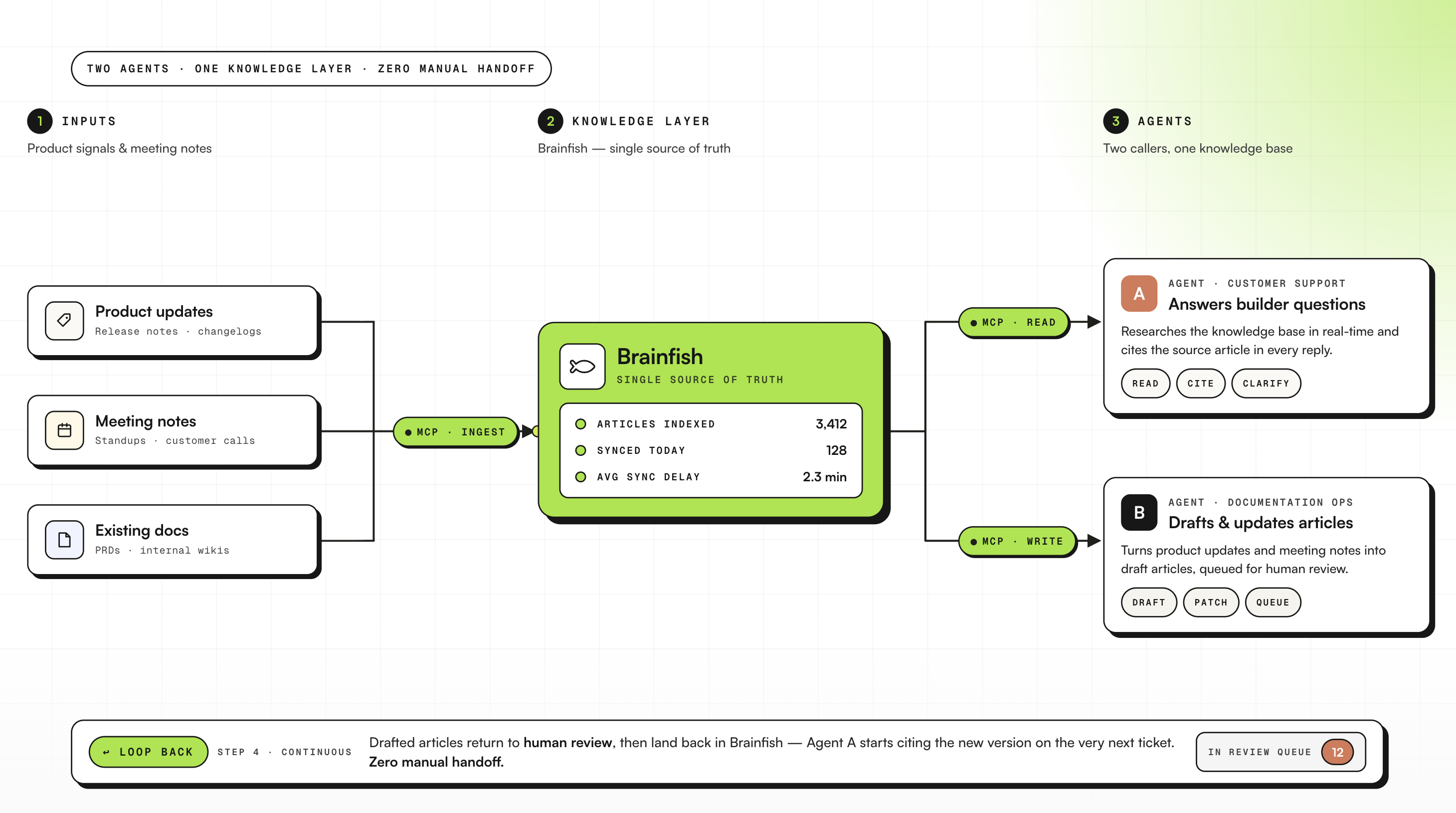

Enterprise AI platform: A documentation workflow built on two agents

An enterprise AI platform uses Brainfish as the knowledge layer for their builder community. Their setup runs two agents: one handles customer-facing support, and a second is dedicated to documentation workflows. Both call Brainfish for research.

Product updates and meeting notes go in as inputs. Brainfish articles come out the other end, directly into review. For a team whose builder community depends on accurate, current documentation, it means the knowledge base keeps pace with the product without a manual handoff in between.

What these teams have in common

None of these teams restructured how they work. Neville ran a full knowledge base audit last week. The B2B fintech company has the CS coaching guide running. The SaaS social platform is drafting and updating articles via Claude. The enterprise AI platform is running the dual-agent architecture in production.

They're doing work that already existed on their plates, just without the overhead of doing it manually.

How to get started

If you're a Brainfish customer who has started using Claude, setup takes under 60 seconds. The full setup guide is here.

If you're not a Brainfish customer yet and you're building your support and CS workflow around Claude, this is where to start.


```python
import time
import requests
from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="percentage", description="Rate of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.time()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."

            latency = (time.time() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)

            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")

        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5
        }

        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```

Frequently Asked Questions

1) What is Brainfish MCP?

Brainfish MCP is a Model Context Protocol (MCP) server that connects Claude directly to your Brainfish knowledge base. Once connected, Claude can pull in Brainfish content as context, search and read articles, and help you draft and update documentation without switching tools.

2) What can Claude do once Brainfish MCP is connected?

Teams use Claude to do things like:

- Audit a whole knowledge base (list collections/documents, spot duplicates, flag stale content).

- Clean up information architecture (review metadata, decide what to move/delete in batch).

- Run “coverage tests” by asking questions and checking what the AI actually returns to customers.

- Turn meeting notes and product updates into draft Brainfish articles pushed straight into review.

3) Is Brainfish MCP safe for internal or customer data?

It’s designed for teams who need to work with real internal knowledge safely: Claude only has access to whatever your Brainfish connection and permissions allow. In practice, teams use it for customer-facing help content and internal enablement because it keeps answers grounded in the same source of truth your org already maintains.

4) Who typically uses Brainfish MCP (support, CS, product, engineering)?

The common pattern is “anyone responsible for keeping product knowledge accurate.” In this article, that includes:

- Support & knowledge teams (coverage testing and keeping the help center current)

- CS teams (real-time coaching during live conversations)

- Product & engineering (turning updates and meeting notes into docs, maintaining technical/help content)

5) How long does setup take and what do I need?

Setup takes under 60 seconds. You add a custom connector in Claude at claude.ai/settings/integrations, point it at https://mcp.brainfi.sh, and authenticate with your Brainfish API token. After that, you can search, read, draft, and audit Brainfish content directly in Claude.
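Once the connector is in place, Claude handles the protocol for you, so you never write this by hand. For the curious, the sketch below shows roughly what a tool invocation looks like on the wire: MCP tool calls are JSON-RPC 2.0 requests using the `tools/call` method. The `build_tool_call` helper is hypothetical, and the `question` argument name is an assumption for illustration; the actual input schema of "brainfish_generate_answer" may differ.

```python
import json
from itertools import count

# Simple incrementing ids so each request can be matched to its response.
_request_ids = count(1)

def build_tool_call(tool_name, arguments):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# The "question" argument name is an assumption, not the documented schema.
request = build_tool_call(
    "brainfish_generate_answer",
    {"question": "How do I reset my password?"},
)
print(json.dumps(request, indent=2))
```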
