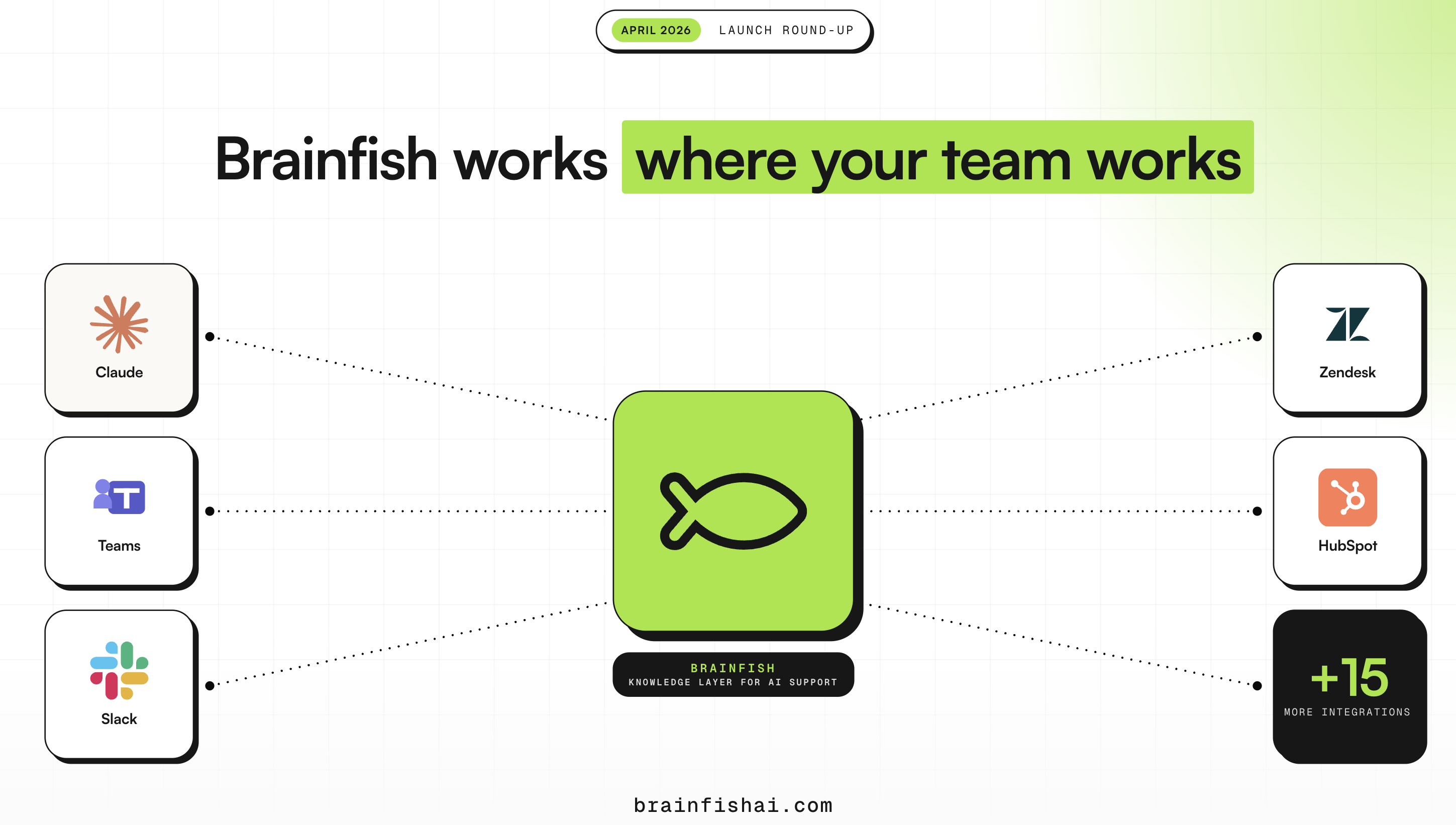

April Launch Round-Up: Brainfish Works Where Your Team Works

Published on May 5, 2026

Your team spent months building a knowledge base: decisions about terminology, escalation paths, which article covers which edge case. Your most experienced people, distilled into documentation. And for most of that time, that knowledge lived in one place: a browser tab that someone opened when they remembered to and closed when they were done. The knowledge was good; the problem was where it lived. That's the part that changes today.

Brainfish MCP, our Model Context Protocol (MCP) server, connects Claude, Cursor, and VS Code Copilot directly to your knowledge base so you can search it, draft into it, audit it, and update it, all inside the same conversation. Claude.ai users connect via OAuth in under 60 seconds.
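For readers wondering what "connects" means at the protocol level: MCP messages are JSON-RPC 2.0 requests, and an assistant discovers a server's tools with `tools/list` and invokes one with `tools/call`. The Python sketch below only builds those two request messages. The tool name `search_articles` and its arguments are hypothetical placeholders, not Brainfish's actual tool schema, and the transport and OAuth steps are left out.

```python
import json
from itertools import count
from typing import Optional

# Each JSON-RPC request carries a unique id so its response can be matched to it.
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools_request() -> dict:
    # Ask the MCP server which tools it exposes (names, descriptions, input schemas).
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> dict:
    # Invoke one tool by name, passing its arguments as a JSON object.
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    # Hypothetical tool name and arguments, for illustration only.
    request = call_tool_request("search_articles", {"query": "refund policy", "limit": 5})
    print(json.dumps(request, indent=2))
```

In practice an MCP client library handles this framing for you; the point is that "search", "draft", and "update" each become a named tool call the assistant can make mid-conversation.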

How Teams are Using the Brainfish MCP

- An engineer at one SaaS social platform asked whether Claude could write and update articles via the API. Their team is doing it now, keeping the help center current with every product update without leaving Claude.

- A B2B fintech company is building a real-time conversation guide for their CS team, grounded live in Brainfish, so agents have the right answer in the moment without tabbing between docs.

- Internally, our team runs knowledge base audits, information architecture cleanup, and coverage testing through MCP without opening the platform once. As one of them put it: "Without MCP, I'd have been clicking through the platform manually."

Now You Can

- Ask Claude what's missing from your knowledge base and fix it in the same chat.

- Turn a meeting note into a published article without switching tools.

- Audit thousands of articles in a single conversation and find the gaps before customers do.

The knowledge base stops being something you maintain separately on a quarterly schedule and starts being something you operate on from wherever you're already working.

Your Knowledge, Wherever It Lives

But that knowledge probably doesn't all live in Brainfish yet.

Most teams have documentation scattered across Confluence, Google Drive, Notion, Guru, Helpjuice, and a dozen other tools they've accumulated over the years. Brainfish already syncs from all of it, without asking anyone to migrate or rebuild anything. The upstream is handled. MCP is what makes the downstream actionable: your knowledge, wherever it already lives, is now reachable from inside Claude.

What makes every downstream answer more accurate is that Brainfish automatically reads your knowledge base and builds a structured understanding of your business: your products, your terminology, the specific meaning of words like "refund" or "onboarding" at your company. That context is synced to every agent, so the answers your customers get are grounded in how your business actually works, not in what a generic model assumes.

Because answer quality depends on what's actually in the knowledge base, the review workflow has improved too: the screen that surfaces content suggestions now shows your team the reasoning behind every flag, so approvals take less time and your team can trust what they're acting on.

The Same Answers, Now in Microsoft Teams

The same knowledge your team now operates on through Claude also reaches them in Microsoft Teams. Someone mentions @brainfish in a channel, gets a grounded answer in-thread, and the question never becomes a DM to the one person who happens to know. New joiners find answers without pinging managers. Support teams stay in their queue instead of context-switching. The Teams integration mirrors the Brainfish Slack integration for enterprise organisations that run on Microsoft: the same @mention workflow, the same cited answers in-thread, the same admin controls over which channels it's active in, and separate attribution in analytics. If your organisation runs on Microsoft rather than Slack, Brainfish now works the same way inside your channels.
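As a rough illustration of the @mention and channel-scoping behaviour described above, here is a minimal Python sketch of the gate a Teams bot might apply before answering. It assumes the Bot Framework message activity shape (mentions listed in `entities`, the Teams channel under `channelData.channel.id`); the `ALLOWED_CHANNELS` allowlist and all helper names are hypothetical, not Brainfish's implementation.

```python
from typing import Optional, Set

# Hypothetical admin-managed allowlist of Teams channel IDs where the bot may answer.
ALLOWED_CHANNELS: Set[str] = {"19:support-channel@thread.tacv2"}


def bot_was_mentioned(activity: dict) -> bool:
    """True if a 'mention' entity targets the bot, i.e. the activity's recipient."""
    bot_id = activity.get("recipient", {}).get("id")
    return bot_id is not None and any(
        entity.get("type") == "mention"
        and entity.get("mentioned", {}).get("id") == bot_id
        for entity in activity.get("entities", [])
    )


def teams_channel_id(activity: dict) -> Optional[str]:
    # Channel messages carry channelData.channel.id; 1:1 chats do not.
    return activity.get("channelData", {}).get("channel", {}).get("id")


def should_answer(activity: dict, allowed: Set[str] = ALLOWED_CHANNELS) -> bool:
    # Answer only message activities, in allowlisted channels, that @mention the bot.
    return (
        activity.get("type") == "message"
        and teams_channel_id(activity) in allowed
        and bot_was_mentioned(activity)
    )


def question_text(activity: dict) -> str:
    # Drop the <at>...</at> mention markup so only the question remains.
    text = activity.get("text", "")
    for entity in activity.get("entities", []):
        if entity.get("type") == "mention" and entity.get("text"):
            text = text.replace(entity["text"], "")
    return text.strip()
```

Keeping the channel check separate from the mention check mirrors the two controls the post describes: admins decide where the bot listens, and users decide when it answers.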

Inside Every Conversation Your Team Is Already Having

When a support agent is mid-ticket, the knowledge they need to answer accurately is often in three different tabs. Brainfish's Internal AI Copilots surface the right article at the right moment inside the conversation the agent is already handling: no search, no tab-switching, the answer finds the agent rather than the other way around. Sales teams use it for demo prep. CS teams use it for live call guidance. The same knowledge base, showing up exactly when someone needs it, without them having to go find it.

For teams operating inside HubSpot or Salesforce, Brainfish AI Actions pull from live CRM data to answer questions without the agent leaving the conversation. The customer's history, their tier, their open tickets: all accessible in context rather than requiring someone to open a second window and look it up separately.

Brainfish also surfaces customer friction automatically through Proactive Nudges: it flags the moments where customers got stuck or answers fell short and summarises them for your team, without anyone needing to file a report or run a manual audit. The product tells you where the knowledge gaps are before they compound.

What Happens When AI Can't Resolve It

Every team deploying AI support eventually faces the same question from enterprise buyers: what happens when the AI can't resolve something? Most tools answer with a dead end. The customer asks for a human, gets transferred to a queue, and starts the conversation from scratch. Every detail they just shared is gone. Your agent starts blind and resolution takes longer.

Zendesk Live Agent Handoff is the direct answer to that question. When a customer needs a human, the transition happens inside the same chat window, with the full conversation already in front of your Zendesk agent. No starting over, no repeating, no context lost between the AI response and the human who closes it.
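The core idea of the handoff, carrying every prior turn to the human agent instead of opening a blank ticket, can be sketched in a few lines of Python. This is a hypothetical data structure for illustration only; `Turn`, `Handoff`, and `escalate` are invented names, not Zendesk's or Brainfish's API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Turn:
    role: str  # "customer" or "ai" in this sketch
    text: str


@dataclass
class Handoff:
    """Hypothetical handoff packet: what a human agent needs to pick up mid-thread."""
    conversation_id: str
    reason: str
    turns: List[Turn] = field(default_factory=list)

    def transcript(self) -> str:
        # Render every prior turn in order so the agent reads the full conversation.
        labels = {"customer": "Customer", "ai": "AI"}
        return "\n".join(f"{labels.get(t.role, t.role)}: {t.text}" for t in self.turns)


def escalate(conversation_id: str, turns: List[Turn], reason: str) -> Handoff:
    # Copy the turns so later activity in the live chat cannot mutate the packet.
    return Handoff(conversation_id=conversation_id, reason=reason, turns=list(turns))
```

The design choice that matters is that the packet carries the whole transcript rather than a summary, which is what lets the agent start mid-thread; how the packet is delivered into Zendesk is outside this sketch.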

For Developer-Facing Teams

For teams with a developer-facing product, API Docs for Help Center brings the full technical reference into the same destination customers use for support content. Connect your OpenAPI spec, surface an interactive API reference with a live HTTP client for inline testing, and give developers one place to find the right endpoint and the right support article with conversational search across both.
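To make "connect your OpenAPI spec" concrete, the sketch below walks an OpenAPI 3 document's `paths` object and flattens it into the method, path, and summary rows an API reference typically lists. It is a minimal sketch under stated assumptions: the spec is already parsed into a Python dict, and `search_operations` is a naive keyword match standing in for conversational search, not how Brainfish implements it.

```python
from typing import List, Tuple

# The operation keys allowed in an OpenAPI 3 Path Item Object.
HTTP_METHODS = ("get", "put", "post", "delete", "options", "head", "patch", "trace")

Operation = Tuple[str, str, str]  # (METHOD, path, summary)


def list_operations(spec: dict) -> List[Operation]:
    """Flatten an OpenAPI 3 `paths` object into rows an API reference could list."""
    rows: List[Operation] = []
    for path, item in spec.get("paths", {}).items():
        for method in HTTP_METHODS:
            operation = item.get(method)
            if operation is None:
                continue  # path items can also hold non-operation keys such as "parameters"
            label = operation.get("summary") or operation.get("operationId", "")
            rows.append((method.upper(), path, label))
    return rows


def search_operations(spec: dict, term: str) -> List[Operation]:
    # Naive case-insensitive keyword match; a stand-in for conversational search.
    needle = term.lower()
    return [
        row for row in list_operations(spec)
        if needle in row[1].lower() or needle in row[2].lower()
    ]
```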

The Same Logic Behind Every Release

Every release here follows the same logic. The knowledge your team has built, working in every tool they already have open: Claude, Teams, Zendesk, Slack, HubSpot, Salesforce, Confluence, Google Drive. Not a new platform to manage or a replacement for anything in the stack. The layer that makes every tool your team is already using smarter about your product.

First answer, right answer. In every tool your team already works in.

Ready to see Brainfish in action? Request a demo here.

```python
import time

import requests  # only needed for the commented-out NeMo Evaluator HTTP call below
from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram(
    "agent.latency", unit="ms", description="Agent response time"
)
agent_invocations_counter = meter.create_counter(
    "agent.invocations", description="Number of times the agent is invoked"
)
# Synchronous gauges require an OpenTelemetry Python release that supports create_gauge.
# The evaluator reports a fraction (0.05 means 5%), so the unit is the dimensionless "1".
hallucination_rate_gauge = meter.create_gauge(
    "agent.hallucination_rate", unit="1", description="Fraction of hallucinated responses"
)
pii_exposure_counter = meter.create_counter(
    "agent.pii_exposure.count", description="Count of responses with PII exposure"
)


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.perf_counter()  # monotonic clock for measuring durations
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."
            latency = (time.perf_counter() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)
            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")
        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5,
        }
        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```

What is Brainfish MCP and what does it do?

Brainfish MCP is a Model Context Protocol server that connects Claude, Cursor, and VS Code Copilot directly to your Brainfish knowledge base. From inside your AI assistant, you can search articles, draft new content, audit for gaps and stale content, and push updates back to Brainfish — all without leaving the conversation. Claude.ai users connect via OAuth in under 60 seconds.

How do I use Claude to update my knowledge base?

With Brainfish MCP connected, you can ask Claude what's missing from your knowledge base and fix it in the same chat. You can turn a meeting note into a published article, audit thousands of articles in a single conversation, or identify coverage gaps before customers do — all from inside Claude without switching tools.

Does Brainfish work inside Microsoft Teams?

Yes. @mention Brainfish in any configured Teams channel and get a grounded, cited answer in-thread, right where the question was asked. It uses the same @mention workflow as the Brainfish Slack integration, with admin-controlled channel scoping and separate attribution in analytics.

What happens when Brainfish AI can't resolve a customer support question?

With Zendesk Live Agent Handoff, the customer is transferred to a live Zendesk agent inside the same chat window. The full AI conversation transcript transfers automatically, so the agent starts mid-thread with full context rather than from zero. Customers never have to repeat themselves and agents never start blind.

What knowledge sources does Brainfish sync from?

Brainfish syncs from Confluence, Google Drive, Notion, Guru, Helpjuice, OpenAPI specs, and your website, among others. It doesn't require teams to migrate or rebuild anything — it reads from wherever the knowledge already lives.

What is Brainfish's Internal AI Copilot?

Internal AI Copilots surface the right knowledge base article at the right moment inside the conversation a support agent or sales rep is already handling. No searching, no tab-switching — the answer finds the agent rather than the other way around. CS teams use it for live support. Sales teams use it for demo prep.

Does Brainfish integrate with HubSpot or Salesforce?

Yes. Brainfish AI Actions pull from live CRM data to answer questions without the agent leaving the conversation. The customer's history, tier, and open tickets are all accessible in context without opening a second window.

What is API Docs for Help Center?

API Docs for Help Center connects your OpenAPI spec to Brainfish, surfacing a full interactive API reference with operation detail views and a live HTTP client alongside your support content. Developers get one destination for both the technical reference and support articles, with conversational search across both.

Frequently Asked Questions
