Retrieval Observability: Seeing the Full Chain Behind Every AI Answer

Published on March 19, 2026

TL;DR: Retrieval observability means seeing the entire chain of how an AI system retrieved and ranked information before generating an answer, not just the final output. Without it, debugging a wrong AI answer is like debugging a SQL query by looking only at the UI result. The solution: trace every retrieval decision, make answers deterministically reproducible, and expose confidence scores, so that you ask the right question: "What did the model retrieve?" and not just "What did it say?"

The Debug Session Nobody Wants

A customer reports that your AI assistant gave them information about a feature that was deprecated three versions ago. The output looks confident. Reasonable. Wrong.

What happened?

You pull up the chat logs. You look at the answer. It reads fine. You ask the model the same question again. This time it answers correctly — pulling from the current version. You run it a third time. Back to the old answer.

This is the moment every engineer working on RAG systems hits a wall. The visibility stops at the output. What got retrieved? What documents were ranked highest? Why was that old version considered more relevant than the current one? None of these answers are visible. The answer is wrong, but the path to wrongness is invisible.

This is the core problem that retrieval observability solves.

"I can see what the AI outputs. I can't see what it retrieved. That's the problem."

And the follow-up is worse:

"Same question. Different answer on replay. That's not an accuracy problem — that's a non-determinism problem."

Without visibility into the retrieval chain, debugging becomes guesswork. Is the model hallucinating? Is the retrieval system broken? Is the knowledge base stale? Or is the query being rewritten in unpredictable ways? Without a trace, you're blind.

What Is Retrieval Observability?

Retrieval observability means seeing the complete chain of decisions made during information retrieval — before the language model ever generates a response.

Specifically: visibility into what query variations were tried, which documents or knowledge nodes were ranked highest, why they were ranked that way, and what confidence the retrieval system assigned to each choice.

In traditional database debugging, you don't just see the output of a query — you see the query plan, the indexes hit, the execution time, the rows scanned. That's why database engineers can diagnose problems efficiently. The information retrieval layer of an AI system deserves the same level of inspection.

Without retrieval observability, the system is a black box: query goes in, answer comes out. You have no visibility into the middle — and that's where most AI errors live.

Why Output Monitoring Isn't Enough

Consider the standard approach to AI quality: test the final answer. Is it correct? Does it match expected output? Does it sound coherent?

This is observability of the last step only. It's equivalent to debugging a complex SQL query by only reading the UI result set. You can see that the result is wrong, but without seeing the query that ran, the indexes used, or the filter conditions, you can't fix it.

Most output-only monitoring tells you that something failed. Retrieval observability tells you where it failed.

Consider this failure scenario:

An AI system is supposed to answer questions about API documentation. The latest version is in the knowledge base. A user asks: "Does your API support OAuth 2.0?"

The system answers: "No, we only support API key authentication." This is wrong.

Output monitoring shows: Answer is factually incorrect.

Retrieval observability shows: The retrieval system ranked a document from version 2.1 (which predates OAuth support) higher than the current version 3.2. The confidence score was 0.92, which is why the model trusted it.

These are completely different diagnoses requiring completely different fixes:

- Output monitoring: "The answer is wrong. Ask the model to be more careful."

- Retrieval observability: "The ranking function is prioritizing older documents. Adjust recency weighting or implement version-aware chunking."

Without the trace, the former is all you get.
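The second diagnosis can be acted on directly. Here is a minimal sketch of version-aware re-ranking, assuming each retrieved chunk carries a `version` string in its metadata and that the current version is known. The names `Candidate` and `rerank_by_version`, the 0.88 score for the current document, and the penalty value are all illustrative assumptions, not part of any particular library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    doc_id: str
    version: str       # e.g. "3.2"; assumed to be present in chunk metadata
    similarity: float  # raw score from the retriever


def parse_version(version):
    """Turn "3.2" into (3, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def rerank_by_version(candidates, current_version, stale_penalty=0.15):
    """Subtract a fixed penalty from chunks older than the current version.

    The penalty value is illustrative, not a tuned recommendation.
    """
    current = parse_version(current_version)

    def adjusted(candidate):
        is_stale = parse_version(candidate.version) < current
        return candidate.similarity - (stale_penalty if is_stale else 0.0)

    # Sort by adjusted score (descending), then doc_id for a stable order.
    return sorted(candidates, key=lambda c: (-adjusted(c), c.doc_id))


candidates = [
    Candidate("auth-v2.1", "2.1", 0.92),  # stale doc with the higher raw score
    Candidate("auth-v3.2", "3.2", 0.88),  # current doc (score is made up)
]
ranked = rerank_by_version(candidates, current_version="3.2")
print([c.doc_id for c in ranked])
```

A fixed penalty is the simplest option. Filtering out old versions entirely is stricter, but it removes the ability to answer questions about legacy behavior.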

The Non-Determinism Problem

Ask a RAG system the same question twice. Get a different answer.

This happens more often than most engineers realize. Same query, same knowledge base, different results. The model might be drawing from different retrieved documents. The retrieval system might be returning results in a different order due to vector similarity tie-breaking. The prompt might have been rewritten differently on the second run.

Non-determinism makes debugging impossible. If a user reports a wrong answer and the team tries to reproduce it, they might get the correct answer on the first retry. Is it fixed? No. Run it again. Wrong answer reappears.

This is a nightmare for production systems. Compliance requires audit trails. Customers require consistency. Support tickets require reproducibility.

The reasons most RAG systems are non-deterministic:

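- Near-tied similarity scores: when two floating-point scores are almost equal, small numerical differences can put the documents in a different order from one run to the next

- Query rewriting done on the fly by an LLM, so the rewritten query varies with model temperature or sampling

- Ranking logic with no explicit tie-break rule, so documents with equal relevance scores come back in arbitrary order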

Retrieval observability solves this by making every step explicit and reproducible. If the system can show the reasoning trace for a given query, it can be replayed. Same question, same trace, same answer, every time.

This is deterministic replay — and it's essential for enterprise AI.
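One piece of this, the tie-breaking, is easy to illustrate. The sketch below assumes the retriever returns `(doc_id, score)` pairs. The function name `rank_deterministically` and the rounding precision are hypothetical choices for illustration.

```python
def rank_deterministically(scored_docs, precision=4):
    """Order (doc_id, score) pairs so near-ties always resolve the same way.

    Scores are rounded to `precision` decimals so tiny floating-point
    differences collapse into an exact tie, which doc_id then breaks.
    """
    return sorted(
        scored_docs,
        key=lambda pair: (-round(pair[1], precision), pair[0]),
    )


# Two runs of the "same" query with slightly different float noise.
run_1 = [("doc-b", 0.9100001), ("doc-a", 0.9100002)]
run_2 = [("doc-a", 0.9099999), ("doc-b", 0.9100003)]

order_1 = [doc_id for doc_id, _ in rank_deterministically(run_1)]
order_2 = [doc_id for doc_id, _ in rank_deterministically(run_2)]
print(order_1, order_2)
```

Rounding does not remove every flip: two scores that straddle a rounding boundary can still land in different buckets. It narrows the window, and the explicit `doc_id` tie-break makes the remaining behavior predictable.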

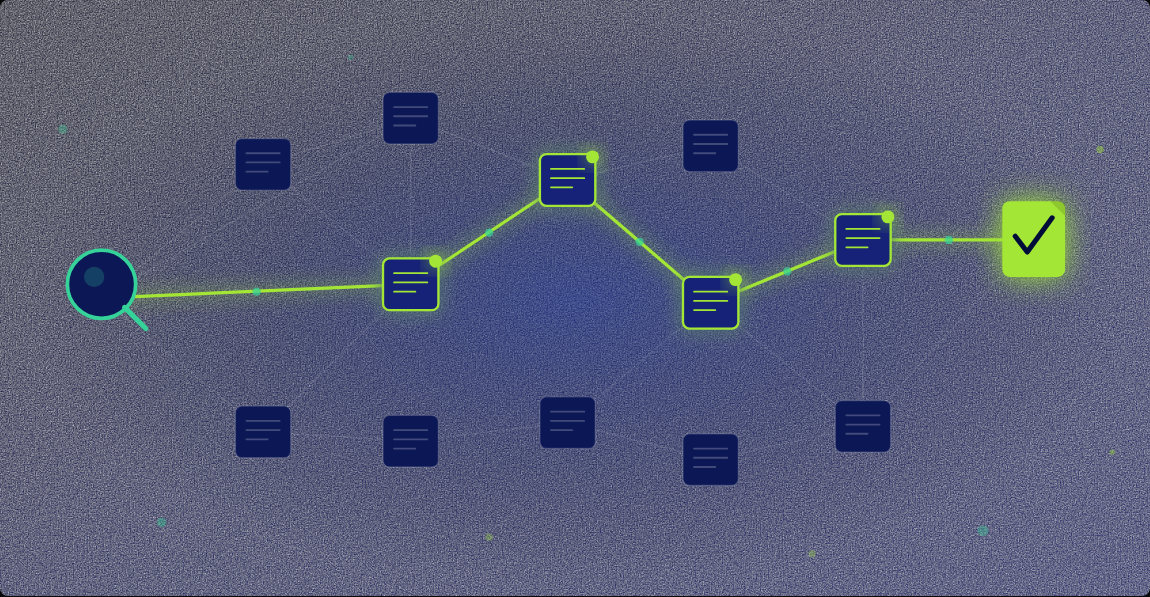

The 4-Phase Reasoning Trace

A complete retrieval trace captures four critical phases:

Phase 1: Query Rewrite

The original user query often isn't the best question to ask the knowledge base. "does the api support webhooks" gets rewritten to "API webhook integration support." The trace should show the original query, the rewritten query, and the reason for the rewrite.

If answers are inconsistent, sometimes the issue is inconsistent query rewriting.
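A rewrite record can be as small as three fields. The sketch below uses a toy rule-based rewriter so the output is reproducible. The `QueryRewrite` class, the `rewrite_query` function, and the filler-word list are assumptions for illustration, and a real system might call an LLM at this step instead.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical list of words the toy rewriter drops.
FILLER_WORDS = {"does", "the", "a", "an", "is", "your"}


@dataclass(frozen=True)
class QueryRewrite:
    original: str
    rewritten: str
    reason: str


def rewrite_query(original):
    """Drop filler words and record what changed and why."""
    words = original.lower().split()
    removed = [w for w in words if w in FILLER_WORDS]
    kept = [w for w in words if w not in FILLER_WORDS]
    if not removed:
        return QueryRewrite(original, original, "no rewrite needed")
    reason = "removed filler words: " + ", ".join(removed)
    return QueryRewrite(original, " ".join(kept), reason)


record = rewrite_query("does the api support webhooks")
print(json.dumps(asdict(record), indent=2))
```

Because the record is serializable, two runs of the same question can be compared field by field to see whether the rewrite step is where they diverged.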

Phase 2: Sub-Queries

Complex questions often need multiple retrieval passes. "What does your API support and what are the limits?" spawns two sub-queries: "API supported features" and "API rate limits and quotas."

The trace should show which sub-queries were generated and why. If a sub-query is missing, that's a knowledge gap. If it returns no results, that's a coverage problem.
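The coverage check can be sketched in a few lines. This assumes a `search` callable that returns matching document ids for a sub-query. The `SubQueryTrace` class, `run_sub_queries`, and the stand-in index are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SubQueryTrace:
    text: str
    reason: str
    result_ids: list = field(default_factory=list)

    @property
    def has_coverage(self):
        """True when the sub-query returned at least one document."""
        return len(self.result_ids) > 0


def run_sub_queries(sub_queries, search):
    """Run each (text, reason) pair through `search` and record the outcome."""
    return [
        SubQueryTrace(text, reason, list(search(text)))
        for text, reason in sub_queries
    ]


# Stand-in index: maps a sub-query to matching document ids.
FAKE_INDEX = {"API supported features": ["features-overview"]}


def fake_search(text):
    return FAKE_INDEX.get(text, [])


traces = run_sub_queries(
    [
        ("API supported features", "covers 'what does your API support'"),
        ("API rate limits and quotas", "covers 'what are the limits'"),
    ],
    fake_search,
)
gaps = [t.text for t in traces if not t.has_coverage]
print(gaps)
```

Here the second sub-query was generated but returned nothing, which is the coverage problem described above.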

Phase 3: Node Selection + Reason

For each sub-query, which knowledge nodes were retrieved and why?

If the wrong node is ranked first, this is where you spot it.
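To make "why" concrete, each selected node can carry a human-readable reason next to its score. In the sketch below, plain term overlap stands in for whatever similarity function the real retriever uses. `NodeSelection`, `select_nodes`, and the sample nodes are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSelection:
    node_id: str
    score: float
    rank: int
    reason: str


def select_nodes(query_terms, nodes, top_k=2):
    """Score nodes by term overlap and record why each was selected.

    `nodes` maps node_id -> text.
    """
    terms = {t.lower() for t in query_terms}
    scored = []
    for node_id, text in nodes.items():
        matched = sorted(terms & set(text.lower().split()))
        score = len(matched) / len(terms) if terms else 0.0
        scored.append((node_id, score, matched))
    # Highest score first; node_id breaks ties so the order is stable.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return [
        NodeSelection(
            node_id, score, rank,
            f"matched terms: {', '.join(matched) or 'none'}",
        )
        for rank, (node_id, score, matched) in enumerate(scored[:top_k], start=1)
    ]


nodes = {
    "webhooks-v3": "webhook integration support in api v3",
    "auth-v3": "api key and oauth authentication",
}
selections = select_nodes(["api", "webhook"], nodes)
for s in selections:
    print(s.rank, s.node_id, s.score, s.reason)
```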

Phase 4: Confidence Score

After all nodes are retrieved and ranked, the system should assign a confidence score: how likely is this retrieval result to produce a correct answer?

Without this score, there's no way to distinguish retrieval failures from model failures.
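A first-pass triage rule might look like the sketch below. The function name, the labels, and the 0.5 threshold are assumptions. The result is a hint about where to look first, not a verdict: a stale document retrieved with high confidence would still be labeled as a suspected model failure here, and only the node-level trace would reveal the ranking problem.

```python
def classify_failure(retrieval_confidence, answer_correct, threshold=0.5):
    """Suggest which layer to inspect first when an answer is wrong."""
    if answer_correct:
        return "ok"
    if retrieval_confidence >= threshold:
        # Retrieval looked sufficient, so suspect generation first.
        return "model_failure_suspected"
    # Retrieval looked weak, so suspect the knowledge base or ranking first.
    return "retrieval_failure_suspected"


print(classify_failure(0.92, answer_correct=False))
print(classify_failure(0.31, answer_correct=False))
```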

Deterministic Replay: Auditing AI Answers

Suppose a compliance officer asks: "Show me how your AI system arrived at this customer answer."

With retrieval observability and deterministic replay, the answer is: "Run this exact query. Here's the trace. Here are the retrieved documents. Here's the reasoning chain. Run it. Same answer. Every time."

This is auditable AI.

Non-deterministic systems can't do this. They can show what happened but can't prove it would happen again. That's unacceptable for regulated industries.

Deterministic replay requires: seeding random number generators, freezing query rewriting logic, locking retrieval ranking, and recording the full reasoning trace.

The payoff: a complete audit trail. Same query, same trace, same answer, same documents retrieved, same confidence score.
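One way to check that a replay really matched is to fingerprint the recorded trace and compare it with the replayed one. The sketch below assumes the trace is a JSON-serializable dict. The field names and the `trace_fingerprint` and `verify_replay` helpers are hypothetical.

```python
import hashlib
import json


def trace_fingerprint(trace):
    """Hash a canonical JSON form of the trace (key order does not matter)."""
    canonical = json.dumps(trace, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_replay(recorded, replayed):
    """True when the replay produced exactly the same trace."""
    return trace_fingerprint(recorded) == trace_fingerprint(replayed)


recorded = {
    "query": "Does your API support OAuth 2.0?",
    "rewritten_query": "API OAuth 2.0 support",
    "documents": ["auth-v3.2", "auth-v2.1"],
    "confidence": 0.84,
}
# Same content, different key order: should still match.
same_replay = {
    "confidence": 0.84,
    "documents": ["auth-v3.2", "auth-v2.1"],
    "rewritten_query": "API OAuth 2.0 support",
    "query": "Does your API support OAuth 2.0?",
}
# Same documents in a different rank order: should not match.
drifted = dict(recorded, documents=["auth-v2.1", "auth-v3.2"])

print(verify_replay(recorded, same_replay), verify_replay(recorded, drifted))
```

Storing the fingerprint alongside the answer gives an auditor a cheap check before diffing full traces.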

What Good Retrieval Traces Reveal

Scenario 1: Stale document retrieved

The trace shows: the older doc scored 0.89, the current one scored 0.84. Recency weighting isn't implemented. Fix: add version-aware retrieval.

Scenario 2: Wrong section of the right document

The trace shows: the right document was retrieved, but the wrong chunk was ranked first. Fix: improve chunk boundaries or add section-level metadata.

Scenario 3: Low confidence + hallucination

The trace shows: confidence 0.31, minimal match, no direct answer found. The model answered anyway. Fix: add confidence thresholds — if confidence < 0.5, return "I don't know" instead of letting the model guess.

Scenario 4: Conflicting knowledge nodes

The trace shows: on run 1, doc A (score 0.92) was retrieved first. On run 2, doc B (score 0.91) was retrieved first. The docs contradict each other, and because their scores are nearly tied, small floating-point differences decide which one ranks first on a given run. Fix: break ties deterministically and flag conflicting sources.

Each of these scenarios is invisible without a retrieval trace. Output monitoring shows only that something went wrong. The trace shows what went wrong and where.
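The confidence threshold from Scenario 3 can be sketched as a gate in front of the model call. `answer_with_gate` and `fake_generate` are hypothetical names, and `generate` stands in for whatever function calls the LLM.

```python
IDK = "I don't know"


def answer_with_gate(question, retrieval_confidence, generate, min_confidence=0.5):
    """Only call the model when retrieval confidence clears the threshold."""
    if retrieval_confidence < min_confidence:
        return {"answer": IDK, "gated": True, "confidence": retrieval_confidence}
    return {
        "answer": generate(question),
        "gated": False,
        "confidence": retrieval_confidence,
    }


calls = []


def fake_generate(question):
    """Stand-in for the LLM call; records that it was invoked."""
    calls.append(question)
    return f"Generated answer for: {question}"


low = answer_with_gate("Do you support SAML?", 0.31, fake_generate)
high = answer_with_gate("Do you support OAuth 2.0?", 0.84, fake_generate)
print(low["answer"], "|", high["answer"])
```

The gated branch never calls the model, so a low-confidence retrieval cannot turn into a confident-sounding guess.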

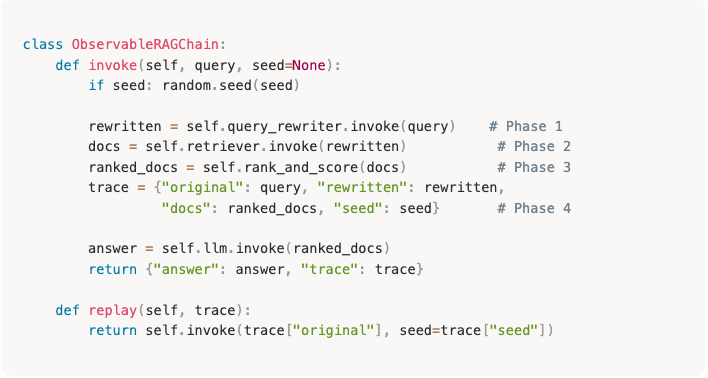

Adding Retrieval Observability to a LangChain Pipeline

Most LangChain RAG pipelines look like this:

query → retriever.invoke() → documents → llm.invoke() → answer

What's missing: visibility into what retriever.invoke() actually returned and why.

To add retrieval observability:

- Wrap the retriever with logging — capture retrieved documents, relevance scores, and metadata.

- Make query rewriting explicit — log both the original and rewritten queries with the rewrite reason.

- Add a ranking step — assign confidence scores and capture why documents were ranked in a given order.

- Record the trace — serialize the full retrieval trace (phases 1–4) before passing documents to the LLM.

- Implement deterministic seeding — set random seeds at the start of each trace so decisions are reproducible.

The trace object is what enables debugging and replay. For a production-ready implementation with full retrieval tracing, see how Brainfish's knowledge layer provides built-in observability.
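The first step, wrapping the retriever, can be sketched without depending on any framework. The wrapper below only assumes the wrapped object exposes `invoke(query)` and returns documents with `page_content` and `metadata` attributes, which is the shape LangChain retrievers use. `TracingRetriever`, `StubRetriever`, and `StubDoc` are hypothetical names, and a relevance score appears in the record only if the underlying retriever happens to put one in the metadata.

```python
import time


class TracingRetriever:
    """Wraps any object exposing invoke(query) and records what it returned."""

    def __init__(self, retriever, sink):
        self._retriever = retriever
        self._sink = sink  # any callable that accepts a dict, e.g. a logger

    def invoke(self, query):
        started = time.perf_counter()
        docs = self._retriever.invoke(query)
        self._sink({
            "query": query,
            "elapsed_ms": round((time.perf_counter() - started) * 1000, 2),
            "documents": [
                {
                    "rank": rank,
                    "metadata": dict(getattr(doc, "metadata", {})),
                    "preview": getattr(doc, "page_content", str(doc))[:80],
                }
                for rank, doc in enumerate(docs, start=1)
            ],
        })
        return docs  # pass documents through unchanged


class StubDoc:
    def __init__(self, page_content, metadata):
        self.page_content = page_content
        self.metadata = metadata


class StubRetriever:
    """Stand-in for a real retriever so the sketch runs on its own."""

    def invoke(self, query):
        return [StubDoc("OAuth 2.0 is supported from v3.0.",
                        {"version": "3.2", "score": 0.88})]


records = []
retriever = TracingRetriever(StubRetriever(), records.append)
docs = retriever.invoke("Does your API support OAuth 2.0?")
print(records[0]["documents"])
```

Because the wrapper returns the documents unchanged, it can sit in front of an existing pipeline without altering the answer path.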

import time

import requests
from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="percentage", description="Rate of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.time()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."
            latency = (time.time() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)
            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")
        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5
        }
        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()

Frequently Asked Questions

What does a confidence score mean in retrieval, and how is it different from answer accuracy?

A retrieval confidence score measures whether the retrieved context is sufficient to answer the question, not whether the answer is correct. High confidence means the knowledge base has strong, relevant information. Low confidence means the knowledge base has weak matches. A system can have high retrieval confidence but the model can still hallucinate — that's a model failure. A system can have low retrieval confidence and the model answers anyway — that's a reliability failure.

How do I add retrieval tracing to an existing LangChain pipeline?

Wrap your retriever with explicit logging that captures original and rewritten queries, retrieved documents with relevance scores, and metadata. Add a ranking step that assigns confidence scores. Record the full trace before passing documents to the LLM. For deterministic replay, implement random seeding so the same seed produces the same query rewriting and ranking decisions.

What does non-determinism mean in a RAG context, and why is it a problem?

Non-determinism means asking the same question twice produces different answers or different retrieval chains. It's a problem because it makes debugging impossible — if an answer is wrong and you try to reproduce it, you might get the right answer instead. It also breaks compliance and audit trails. Deterministic replay means the same query always produces the same trace, the same retrieved documents, and the same answer.

How is retrieval observability different from standard RAG monitoring?

Standard RAG monitoring observes the final answer quality — Is it correct? Does it match user feedback? Retrieval observability observes the retrieval layer itself — What was retrieved? Why? With what confidence? You can have 95% accuracy and still have terrible retrieval observability, meaning you can't diagnose why the other 5% failed.

What's the difference between retrieval observability and just logging what documents were retrieved?

Logging tells you what was retrieved. Observability tells you why it was retrieved and how confident the system is in that retrieval. Observability includes the full reasoning trace: query rewrite decisions, relevance scores, ranking logic, and a confidence score indicating whether the retrieved context is likely sufficient for a correct answer. Logging is data; observability is diagnosis.
