Reduce Time to Value in Slack Without Adding CSM Headcount

Published on March 3, 2026

Enterprise SaaS onboarding often slows down when critical questions get stuck in Slack threads. This article explains how Brainfish’s Slack integration delivers real-time, context-aware answers, helping Customer Success teams reduce time to value, accelerate onboarding, and improve efficiency without adding CSM headcount.

If you lead Customer Success at a Series B+ B2B SaaS company, you're measured on time to value, onboarding duration, NRR, and cost-to-serve. When onboarding happens in shared Slack channels, delayed answers slow everything down.

This article is for Customer Success Leaders scaling enterprise onboarding across multi-persona, modular products. It explains how Brainfish's Slack integration reduces time to value at the product level—without adding CSM headcount—and what measurable impact to expect in the first 60–90 days.

Why Time to Value Slows Down in Slack

In complex SaaS products, onboarding questions are rarely simple. The right answer depends on:

- Enabled modules

- Integration stack

- Account-specific workflows

- User role (admin vs. operator vs. developer)

- Custom implementation details

When onboarding runs through shared Slack channels, common bottlenecks appear:

- CSMs wait on Product or Engineering to clarify edge cases

- Knowledge lives in demos, training videos, and tribal Slack threads

- Internal documentation is outdated or hard to search

- Answers vary depending on who responds

Each delay pushes back the customer's first meaningful value milestone. For companies with modular, service-heavy products and multiple user personas, this friction compounds quickly.

How Brainfish's Slack Integration Reduces Time to Value

Brainfish doesn't replace Slack—it becomes the AI-powered knowledge layer inside it.

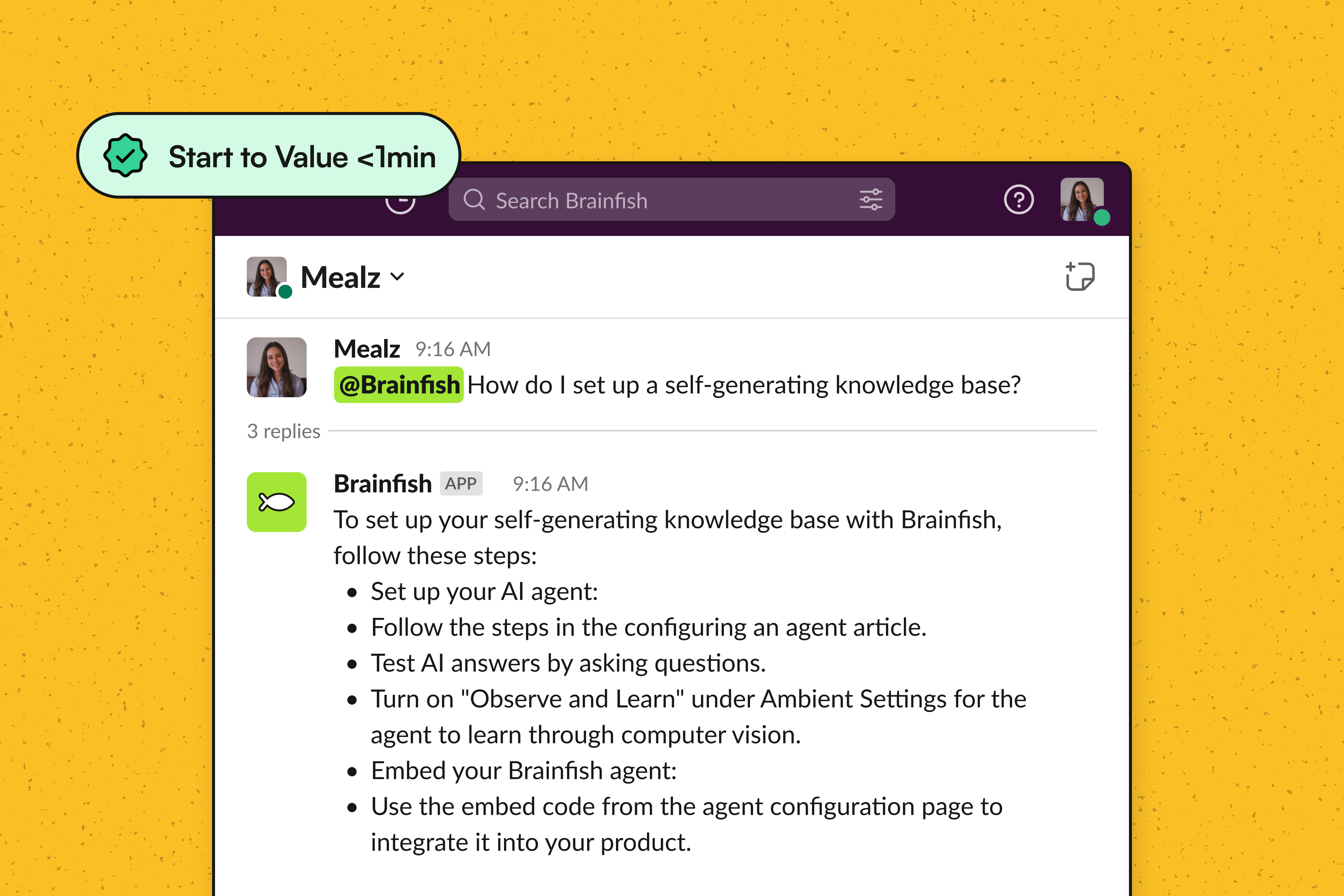

1. Real-Time Answers Inside Slack

Brainfish integrates directly into Slack, letting users ask questions and get answers immediately within existing channels.

Instead of:

- Tagging Product

- Searching Confluence

- Digging through old Slack threads

CSMs and customers get instant responses grounded in verified product knowledge. This cuts response time during onboarding phases where momentum matters most.
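To make the flow concrete, here is a minimal sketch of how a question asked in a channel could become a threaded reply. It is not Brainfish's implementation: `lookup_answer` is a hypothetical stand-in for the knowledge layer, and the sample IDs are invented. The event fields (`text`, `channel`, `ts`, `thread_ts`) follow Slack's `app_mention` event, and the returned keys match the arguments Slack's `chat.postMessage` method accepts.

```python
import re

# Slack encodes a mention of the bot as <@USERID> inside the message text.
MENTION = re.compile(r"<@[A-Z0-9]+>")


def lookup_answer(question: str) -> str:
    """Hypothetical stand-in for the knowledge layer; returns a canned reply."""
    return f"(placeholder answer for: {question})"


def build_reply(event: dict) -> dict:
    """Turn an app_mention event into arguments for a threaded reply."""
    question = MENTION.sub("", event["text"]).strip()
    return {
        "channel": event["channel"],
        # Stay in the existing thread if there is one; otherwise start a
        # thread on the message that asked the question.
        "thread_ts": event.get("thread_ts", event["ts"]),
        "text": lookup_answer(question),
    }


# Invented sample event for illustration only.
event = {
    "type": "app_mention",
    "user": "U111",
    "text": "<@U0BOT> How do I enable SSO?",
    "channel": "C123",
    "ts": "1700000000.000100",
}
reply = build_reply(event)
print(reply)
```

Replying in the thread keeps the answer next to the question, so the shared onboarding channel stays readable for everyone else.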

2. Converting Video Knowledge into Searchable Answers

In many SaaS companies, critical onboarding knowledge lives in:

- Sales engineer demos

- Product walkthrough recordings

- Customer training sessions

- Internal enablement videos

Brainfish transforms recorded video content into structured, searchable documentation—unlocking knowledge that would otherwise remain trapped in long-form recordings.

When a CSM asks a question in Slack, Brainfish generates an answer based on:

- Existing help center articles

- Internal documentation

- Processed video transcripts

This eliminates the delay where a CSM waits for someone to "remember which demo covered that."
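As a rough illustration of answering from several source types at once, the sketch below ranks snippets from help center articles, internal docs, and video transcripts by simple word overlap with the question. The scoring method, the `Snippet` type, and the sample content are all assumptions made for this example; the article does not describe how Brainfish actually retrieves or ranks content.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    source: str  # "help_center", "internal_doc", or "video_transcript"
    title: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def rank_snippets(question: str, snippets: list[Snippet], top_k: int = 2) -> list[Snippet]:
    """Return up to top_k snippets that share the most words with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(s.text)), s) for s in snippets]
    matching = [pair for pair in scored if pair[0] > 0]
    matching.sort(key=lambda pair: pair[0], reverse=True)
    return [s for _, s in matching[:top_k]]


# Invented sample knowledge, one entry per source type.
KNOWLEDGE = [
    Snippet("help_center", "Enable SSO", "Admins enable SSO under Settings then Security"),
    Snippet("internal_doc", "Billing FAQ", "Invoices are issued monthly"),
    Snippet("video_transcript", "Kickoff demo", "In this demo we configure SSO with an identity provider"),
]

results = rank_snippets("How do I configure SSO?", KNOWLEDGE)
for s in results:
    print(f"[{s.source}] {s.title}")
```

In this toy data the demo transcript ranks first, which is the point of the section: the best answer may sit in a recording rather than in a written article.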

3. Reducing Documentation Debt

Documentation debt slows onboarding. When docs are stale, CSMs fall back on:

- Asking Product basic questions

- Sending partial answers

- Escalating unnecessarily

Brainfish automates the generation and updating of documentation from existing content sources. As product workflows evolve, knowledge updates dynamically rather than relying on manual edits.

This reduces dependency on institutional knowledge and lowers the risk of incorrect Slack guidance.

4. Segmenting Answers by Persona and Account

Enterprise onboarding rarely follows one path. Brainfish supports customizable documentation tailored by:

- User role

- Use case

- Enterprise account configuration

- Specific deployment variables

This enables personalized responses at scale. For example:

An admin configuring SSO receives configuration-level guidance. An end user onboarding into a limited module receives user-specific steps.

Segmentation ensures Slack-delivered answers match the customer's context, directly reducing time to value.
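One way to picture segmentation is as a filter applied before any answer is generated. The sketch below keeps only the articles that match the asker's role and the modules enabled on their account. The `Article` and `Asker` types, the role names, and the module names are hypothetical and chosen only to mirror the admin and end-user example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    title: str
    roles: frozenset[str]  # roles this article is written for
    module: str            # product module the article covers


@dataclass(frozen=True)
class Asker:
    role: str
    enabled_modules: frozenset[str]  # modules enabled on the asker's account


def visible_articles(asker: Asker, articles: list[Article]) -> list[str]:
    """Return titles that match both the asker's role and enabled modules."""
    return [
        a.title
        for a in articles
        if asker.role in a.roles and a.module in asker.enabled_modules
    ]


# Invented sample content.
ARTICLES = [
    Article("Configure SSO", frozenset({"admin"}), "core"),
    Article("Run your first report", frozenset({"admin", "operator"}), "reporting"),
    Article("Webhook reference", frozenset({"developer"}), "integrations"),
]

admin = Asker("admin", frozenset({"core", "reporting"}))
operator = Asker("operator", frozenset({"reporting"}))

print(visible_articles(admin, ARTICLES))
print(visible_articles(operator, ARTICLES))
```

Filtering first means an operator on a limited module never sees admin-only configuration steps, even when the wording of the question would otherwise match them.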

Built-In Controls to Prevent Outdated Guidance

Slack answers are only useful if they're accurate. Brainfish reduces knowledge drift through:

- Continuous ingestion of updated documentation

- Processing of new video content and workflows

- Centralized knowledge governance

- An admin interface to review and manage content

This shifts knowledge from static, manually maintained documents to a continuously learning system—reducing the risk that onboarding advice in Slack lags behind product releases.
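A simple way to reason about knowledge drift is to compare when each topic's documentation was last updated with when the related feature last shipped. The check below is an illustrative sketch with invented topics and dates, not a description of Brainfish's governance tooling.

```python
from datetime import date


def find_stale_topics(
    doc_updated: dict[str, date], feature_released: dict[str, date]
) -> list[str]:
    """Return topics whose documentation predates the feature's latest release."""
    return sorted(
        topic
        for topic, updated in doc_updated.items()
        if topic in feature_released and updated < feature_released[topic]
    )


# Invented sample dates.
doc_updated = {
    "sso": date(2026, 1, 10),
    "reporting": date(2026, 2, 20),
    "webhooks": date(2025, 11, 3),
}
feature_released = {
    "sso": date(2026, 2, 1),
    "reporting": date(2026, 2, 15),
    "webhooks": date(2025, 10, 1),
}

stale = find_stale_topics(doc_updated, feature_released)
print(stale)
```

Here only the SSO documentation is flagged, because it was last edited before the most recent SSO release.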

What Measurable Impact to Expect in 60–90 Days

For Customer Success Leaders, the goal isn't Slack activity—it's faster time to value.

Within the first 60–90 days, customers have observed:

- 20–40% higher self-service success

- Faster Slack response times during onboarding

- Reduced dependency on Product and Engineering for clarifications

- Fewer hours spent manually updating onboarding content

- Fewer repetitive internal questions

Leading indicators of improved time to value include:

- Shorter gap between kickoff and first successful configuration milestone

- Fewer Slack threads requiring cross-functional escalation

- Higher confidence among CSMs when answering onboarding questions
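To track the first of these indicators, you can compute the number of days between kickoff and the first successful configuration milestone for each account, then compare medians across cohorts. The sketch below uses invented dates purely to show the arithmetic; the cohort names and figures are not customer results.

```python
from datetime import date
from statistics import median


def days_to_first_value(kickoff: date, first_milestone: date) -> int:
    """Days between kickoff and the first successful configuration milestone."""
    return (first_milestone - kickoff).days


def median_ttv(cohort: list[tuple[date, date]]) -> float:
    """Median time to value, in days, for a list of (kickoff, milestone) pairs."""
    return median(days_to_first_value(k, m) for k, m in cohort)


# Invented sample cohorts: (kickoff date, first milestone date) per account.
baseline = [
    (date(2026, 1, 5), date(2026, 2, 4)),
    (date(2026, 1, 12), date(2026, 2, 21)),
    (date(2026, 1, 19), date(2026, 2, 23)),
]
pilot = [
    (date(2026, 3, 2), date(2026, 3, 23)),
    (date(2026, 3, 9), date(2026, 4, 3)),
    (date(2026, 3, 16), date(2026, 4, 8)),
]

baseline_days = median_ttv(baseline)
pilot_days = median_ttv(pilot)
print(f"Baseline median: {baseline_days} days")
print(f"Pilot median: {pilot_days} days")
print(f"Difference: {baseline_days - pilot_days} days")
```

The median is used rather than the mean so that one unusually slow enterprise rollout does not dominate the comparison.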

Because answers are delivered instantly and grounded in structured knowledge, onboarding momentum increases without increasing headcount.

Why This Matters for Customer Success Leaders

Customer Success Leaders are measured on:

- Time to Value

- Net Revenue Retention

- Cost-to-Serve

- Onboarding efficiency

When Slack becomes a bottleneck, onboarding slows, margins erode, and expansion revenue is put at risk.

Brainfish addresses this by:

- Centralizing knowledge

- Making product expertise instantly accessible inside Slack

- Converting scattered content into structured answers

- Reducing reliance on tribal knowledge

The result: a more predictable onboarding motion.

Final Takeaway

To reduce time to value in Slack without adding CSM headcount, you need:

- Real-time, context-aware answers inside Slack

- Automated conversion of existing knowledge into searchable documentation

- Segmented responses by persona and account

- Continuous knowledge updates to prevent drift

When Slack answers become instant and accurate, onboarding accelerates.

To see how this maps to your enterprise onboarding channels, explore how Brainfish integrates into your Slack workflows and measure the results against your current time-to-value benchmarks.

```python
import time

# import requests  # Only needed if the real evaluator HTTP call below is enabled.
from opentelemetry import metrics, trace
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics.
agent_latency_histogram = meter.create_histogram(
    "agent.latency", unit="ms", description="Agent response time"
)
agent_invocations_counter = meter.create_counter(
    "agent.invocations", description="Number of times the agent is invoked"
)
# The synchronous gauge (create_gauge) requires a recent opentelemetry-api release.
# The recorded value is a fraction between 0 and 1, so the unit is dimensionless.
hallucination_rate_gauge = meter.create_gauge(
    "agent.hallucination_rate", unit="1", description="Fraction of hallucinated responses (0 to 1)"
)
pii_exposure_counter = meter.create_counter(
    "agent.pii_exposure.count", description="Count of responses with PII exposure"
)


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.perf_counter()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."

            latency = (time.perf_counter() - start_time) * 1000  # milliseconds

            # Record metrics.
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)

            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")

        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5,
        }

        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query.
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator.
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics.
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as an event on the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics.
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context.
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop.
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```
