Slack AI Agents for Instant Company Knowledge

Published on March 3, 2026


Brainfish Slack AI agents answer questions, surface knowledge, and eliminate repetitive interruptions — directly inside your Slack workspace. See how it works.

Your best engineer just got pulled into a 20-minute conversation explaining the deployment process — for the fourth time this week. Your newest hire is sitting in a channel, unsure who to ask about the PTO policy. And somewhere in your Slack workspace, a support agent is scrolling through six months of channel history trying to find a product spec that was shared "sometime last quarter."

This is the reality of how most organizations use Slack today. It's the central hub for collaboration, but the knowledge that flows through it is scattered, siloed, and hard to retrieve when it matters most. Teams lose hours to information retrieval and repetitive questions instead of focusing on the work that actually moves the business forward.

Today, we're launching Brainfish Slack Agents — AI teammates that work directly in your Slack workspace, answering questions and completing tasks using your company's approved knowledge base.

The Problem: Knowledge Is Everywhere, but Nowhere When You Need It

As organizations grow, a predictable pattern emerges. Knowledge gets distributed across channels, documents, wikis, and — most problematically — individual people's heads. The result is a set of compounding problems that slow teams down every single day.

Subject matter experts become bottlenecks. The people who know the most get interrupted the most. Engineers, HR leads, and product managers spend a disproportionate amount of their day answering the same questions over and over, which pulls them away from strategic work.

New hires struggle to ramp up. Onboarding employees face a paradox: they have the most questions but the least context about who to ask or where to look. Many hesitate to ask at all, slowing their time to productivity.

Information is inconsistent. When different people answer the same question, you get different answers. This creates confusion, errors, and eroded trust in internal communications.

Decision-making stalls. Teams can't move forward on projects when they're waiting on someone to surface a piece of information. These micro-delays compound into real project slowdowns.

The common thread is that organizations need a way to make their company knowledge instantly accessible within Slack — where teams already work — with AI that understands their specific context. That's exactly what Slack AI agents deliver.

Introducing Brainfish Slack Agents

Brainfish Slack Agents are AI assistants that live in your Slack workspace, working alongside your team to answer questions, provide information, and complete tasks. They're grounded in your company's approved knowledge base, so responses are accurate and on-brand. Your team gets instant, reliable answers without leaving Slack or waiting for human availability.

Unlike generic AI chatbots or simple scripted Slack bots, Brainfish Slack Agents understand your company's specific content, terminology, and organizational structure. They work as dedicated team members in the channels where your people already collaborate.

How Slack AI Agents Work

Channel-based deployment. Add an agent to any Slack channel — an IT support channel, a sales enablement channel, an onboarding channel — and it becomes a dedicated resource for that team. Different agents can access different knowledge sources, so the engineering channel gets technical documentation while the HR channel gets policy guides.

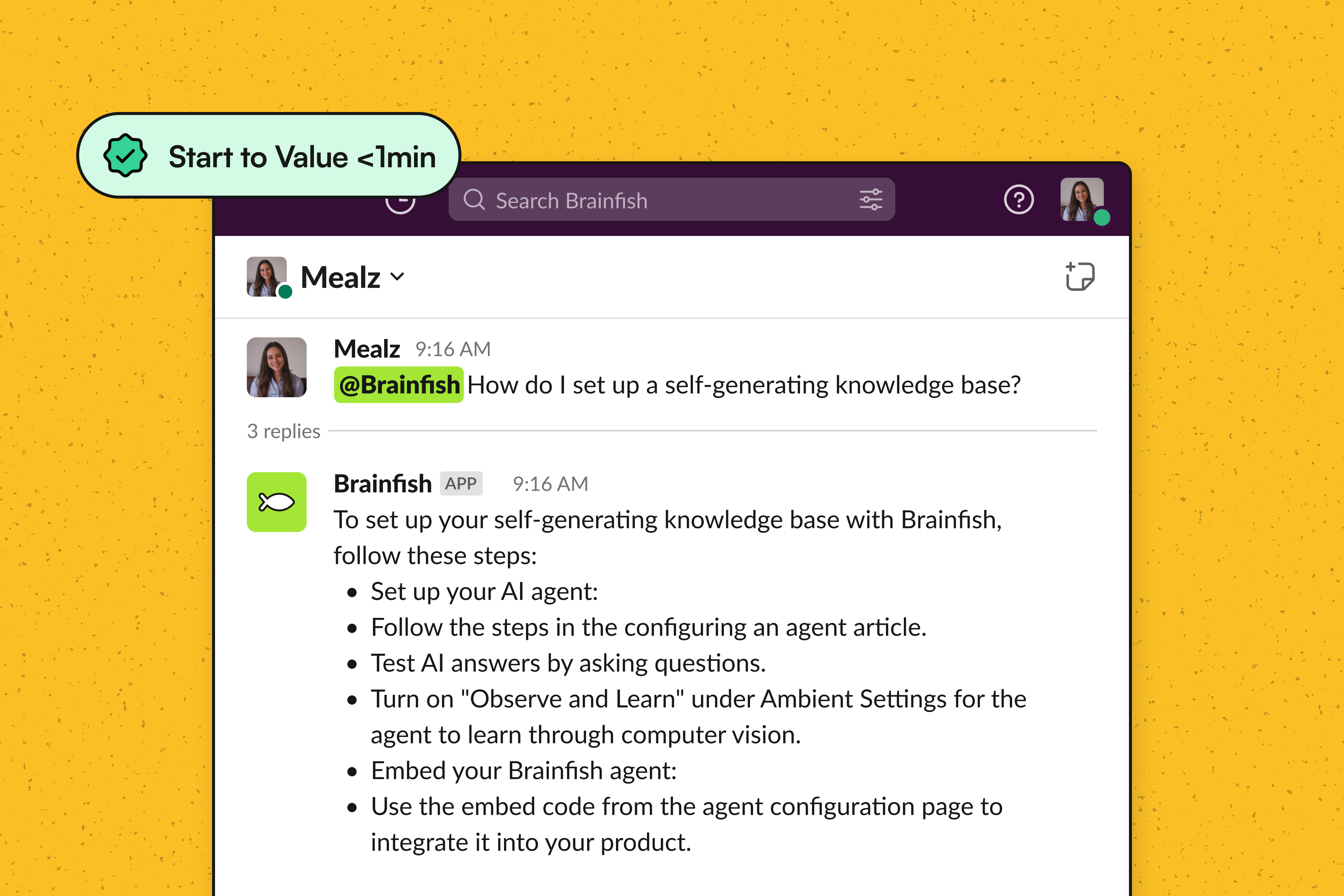
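To make the channel-based idea concrete, here is a minimal sketch of how channel-scoped knowledge access could be modeled. It is not Brainfish's actual configuration format: the `AgentConfig` class, the channel names, and the knowledge-source labels are all hypothetical, chosen only to illustrate that each channel's agent sees its own set of sources.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentConfig:
    """Hypothetical per-channel agent definition (not a real Brainfish schema)."""
    name: str
    knowledge_sources: frozenset = field(default_factory=frozenset)


# Hypothetical channel names and knowledge-source labels, for illustration only.
CHANNEL_AGENTS = {
    "#it-support": AgentConfig("it-agent", frozenset({"it-runbooks", "vpn-guides"})),
    "#hr-questions": AgentConfig("hr-agent", frozenset({"hr-policies", "benefits"})),
    "#engineering": AgentConfig("eng-agent", frozenset({"api-docs", "deploy-guides"})),
}


def sources_for_channel(channel: str) -> frozenset:
    """Return the knowledge sources an agent in `channel` may read.

    Channels without a configured agent get an empty set, so nothing is
    searchable there by default.
    """
    config = CHANNEL_AGENTS.get(channel)
    return config.knowledge_sources if config else frozenset()
```

Under these assumptions, a question asked in `#engineering` can never be answered from `hr-policies`, because that source is simply not in the engineering agent's set.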

Mention them like a colleague. Tag a Brainfish agent with an @mention, just like you'd tag a teammate. Ask a question in natural language and get an immediate, knowledge-grounded response.
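For readers curious what an @mention looks like under the hood: Slack delivers user mentions in message text as `<@USERID>` tokens. The sketch below shows one way an agent could detect that it was mentioned and pull out the question. The agent user ID and the `extract_question` helper are hypothetical, and this is an illustration rather than how Brainfish implements it.

```python
import re
from typing import Optional

# Slack encodes user mentions in message text as <@USERID>, optionally
# followed by a |label part. This pattern captures the user ID.
MENTION_PATTERN = re.compile(r"<@([A-Z0-9]+)(?:\|[^>]*)?>")

AGENT_USER_ID = "U0AGENT01"  # hypothetical ID, for illustration only


def extract_question(text: str, agent_user_id: str = AGENT_USER_ID) -> Optional[str]:
    """Return the question addressed to the agent, or None if it was not mentioned."""
    if agent_user_id not in MENTION_PATTERN.findall(text):
        return None
    # Strip every mention token (the agent's and anyone else's), then
    # collapse the leftover whitespace.
    question = " ".join(MENTION_PATTERN.sub(" ", text).split())
    return question or None
```

A message such as `<@U0AGENT01> how do I connect to the VPN?` would yield the plain question text, while messages that mention nobody, or only other users, are ignored.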

Private messaging. Employees can message agents directly for confidential questions — whether that's a benefits question they'd rather not ask publicly or a quick refresher on a process they've forgotten.
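The difference between a direct message and a channel message can be sketched as a small routing rule. Slack's message events carry a `channel_type` field (`"im"` for direct messages) and a `bot_id` field on messages posted by bots. The `should_respond` function below is a hypothetical simplification built on those two fields, not Brainfish's actual logic: answer every direct message, but answer in channels only when mentioned.

```python
def should_respond(event: dict, agent_user_id: str) -> bool:
    """Decide whether the agent should answer a Slack message event.

    Simplified, hypothetical rule: always answer direct messages, and
    answer in channels only when the agent is mentioned.
    """
    # Ignore messages posted by bots (including the agent itself) to avoid loops.
    if event.get("bot_id"):
        return False
    # Direct messages are private, so no mention is required.
    if event.get("channel_type") == "im":
        return True
    # In shared channels, respond only to an explicit <@USERID> mention.
    return f"<@{agent_user_id}>" in event.get("text", "")
```

Keeping direct messages mention-free matches the "confidential question" use case: the employee just types the question, and nothing is posted where colleagues can see it.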

Knowledge base integration. Every response is grounded in your approved company knowledge. This isn't a generic AI making things up. It's an intelligent assistant drawing from the documentation, policies, and resources your organization has already created.
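The grounding idea can be illustrated with a toy example: answer only when an approved document matches the question, cite which document was used, and decline otherwise. The keyword-overlap scoring below is a deliberately simple stand-in for whatever retrieval a production system uses, and the document IDs, document text, and `min_overlap` threshold are all made up for illustration.

```python
import re
from typing import NamedTuple, Optional


class Answer(NamedTuple):
    text: str
    source: Optional[str]  # ID of the approved document used, or None


# Hypothetical approved knowledge base: document ID -> content.
APPROVED_DOCS = {
    "vpn-setup": "Install the VPN client, then sign in with your company account.",
    "pto-policy": "Submit time off requests in the HR portal at least two weeks ahead.",
}


def _tokens(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_from_knowledge(question: str, docs: dict = APPROVED_DOCS,
                          min_overlap: int = 2) -> Answer:
    """Return the best-matching approved document, or decline to answer."""
    question_words = _tokens(question)
    best_id, best_score = None, 0
    for doc_id, body in docs.items():
        # Score = number of question words found in the document text or its ID.
        score = len(question_words & (_tokens(body) | _tokens(doc_id)))
        if score > best_score:
            best_id, best_score = doc_id, score
    if best_id is None or best_score < min_overlap:
        # No sufficiently relevant approved source: decline instead of guessing.
        return Answer("I couldn't find this in the approved knowledge base.", None)
    return Answer(docs[best_id], best_id)
```

The important design choice is the fallback branch: when nothing in the approved set is relevant enough, the sketch says so rather than generating an unsupported answer.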

Real-World Use Cases for Slack AI Assistants

The versatility of Brainfish Slack Agents means they fit naturally into workflows across your entire organization.

Internal IT and Employee Support

IT teams field a constant stream of repetitive requests — password resets, VPN setup instructions, software access questions. A Brainfish agent in your IT support channel handles these instantly, freeing up your IT team to focus on complex infrastructure work instead of answering "how do I connect to the VPN" for the hundredth time.

Sales Enablement

Sales teams need fast access to product details, pricing information, and competitive intelligence. With a knowledge bot in your sales channel, reps can pull up the information they need in seconds, right in the middle of deal preparation, without hunting through a CRM or waiting for product marketing to respond.

Onboarding New Employees

The first few weeks at a new job are overwhelming. A Slack onboarding assistant gives new hires a safe, always-available resource to ask questions — from "where do I find the brand guidelines" to "what's the process for requesting time off." This accelerates ramp-up time and reduces the burden on managers and onboarding buddies.

Customer Support Agent Enablement

Support agents working in internal channels can query a Brainfish agent for product details, troubleshooting steps, and policy information while actively helping customers. This reduces handle times and improves the consistency of customer-facing responses.

Engineering Documentation Access

Developers can query agents for architecture details, API documentation, deployment procedures, and best practices — all without leaving Slack to dig through a wiki or interrupt a senior engineer.

Why Brainfish Slack Agents Are Different

The market for AI-powered Slack tools is growing, but most options fall into one of two camps: generic AI assistants that aren't grounded in your company's knowledge, or basic Slack bots that are limited to scripted, keyword-triggered responses.

Brainfish Slack Agents occupy a fundamentally different position.

Grounded in your approved knowledge. Generic AI tools like standalone chatbots don't have access to your internal documentation and can produce inaccurate or fabricated answers. Brainfish agents draw exclusively from your knowledge base, so the information they provide is accurate and sanctioned.

Channel-specific customization. You can deploy different agents to different channels, each with access to the knowledge sources relevant to that team. Your engineering agent knows about technical documentation. Your HR agent knows about policies and benefits. This isn't a one-size-fits-all assistant.

Native to the Slack workflow. There's no context-switching, no separate app to open, no new interface to learn. Agents work where your team already works, which means adoption happens naturally.

Natural team integration. Agents behave like team members, not external tools. They respond to mentions, participate in channels, and handle private messages — fitting seamlessly into the way your team already communicates.

How Slack AI Agents Boost Team Productivity

When you remove the friction of information retrieval from your team's daily workflow, the effects are immediate and measurable.

Subject matter experts get their time back. Instead of being interrupted dozens of times a day with questions they've answered before, they can focus on the high-value, strategic work they were hired to do.

New employees reach productivity faster. With instant access to company knowledge from day one, onboarding timelines compress, and new hires feel supported rather than lost.

Information becomes consistent across the organization. When everyone is drawing from the same approved knowledge base, you eliminate the "different people, different answers" problem that creates confusion and errors.

Teams make decisions faster. No more waiting for someone to be available to answer a question. The information is there when it's needed, keeping projects and initiatives moving forward.

Getting Started with Brainfish Slack Agents

Brainfish Slack Agents integrate into your existing Slack workspace with minimal setup. Connect your knowledge sources, deploy agents to the channels where they'll be most valuable, and your team can start asking questions immediately.

There's no complex configuration, no lengthy implementation process, and no new tools for your team to learn. It works within the platform your organization already relies on every day.

If your team is losing time to repetitive questions, knowledge silos, and subject matter expert bottlenecks, Brainfish Slack Agents offer a practical path forward: AI that's grounded in your knowledge, integrated into your workflow, and designed to make your team more productive.

Watch a 2-Minute Video Walkthrough

Ready to see it?

Stop losing 40% of your team's day to context-switching. Book a demo or reach out to your customer success manager (CSM).

```python
import time
import requests

from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="percentage", description="Rate of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.time()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."

            latency = (time.time() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)

            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)

            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")

        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5
        }

        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```
