AI Knowledge Base vs. Chatbot: Which Does Your Support Team Actually Need?

Published on March 30, 2026

New to this topic? Start with our complete guide to AI knowledge bases.

"Should we get a chatbot or a knowledge base?" is usually the wrong question. The right question is: where does your support problem actually live?

A chatbot and an AI knowledge base solve different problems, and buying the wrong one (or expecting one to do the other's job) is one of the most common support tech mistakes teams make.

What's the Difference?

AI Chatbot

An AI chatbot is a conversational interface. Customers type questions; the bot responds in natural language. The bot may answer directly, ask clarifying questions, escalate to a human, or hand off to a workflow.

The chatbot is the front end of the interaction — the conversation layer. What it knows comes from whatever knowledge source is connected to it.

Examples: Intercom Fin, Zendesk AI Agent, Freshdesk Freddy, custom GPT-based agents.

AI Knowledge Base

An AI knowledge base is a retrieval system for structured knowledge. It stores, organizes, validates, and serves information — both to humans (in a help center or search interface) and to AI agents (via API or integration).

The knowledge base is the back end — the source of truth layer. It determines what answers are available and how accurate they are.

Examples: Brainfish, Guru, Document360, traditional help centers with AI search.

The Key Insight: They're Not Alternatives — They're Layers

Here's what matters: a chatbot without a good knowledge base gives bad answers. A knowledge base without a conversational interface requires customers to search rather than ask.

Most teams think they're choosing between two options. In reality:

- A chatbot is how customers interact

- A knowledge base is where the answers come from

You need both. The question is which you're missing — or which is the current bottleneck.

When You Need a Better Chatbot

You need a better chatbot when:

Your problem is conversation quality. Customers are abandoning chat interactions because the responses are robotic, context-free, or don't handle escalation well. The issue is the conversation layer, not the knowledge.

You're missing a conversational channel entirely. You have a help center, but customers want to ask questions in a chat interface. The bottleneck is access, not knowledge accuracy.

Routing and escalation are breaking. The current bot can't figure out when to hand off to a human, or the handoff loses context. That's a conversation layer problem.

You need to cover multiple channels. Email, chat, in-product, mobile — a chatbot layer is what unifies the customer interaction across channels.

When You Need a Better Knowledge Base

You need a better knowledge base when:

Your AI is giving wrong answers. The chatbot is working, but the answers it gives are inaccurate, outdated, or incomplete. This is almost always a knowledge layer problem, not a chatbot problem.

Your deflection rates have plateaued. You've optimized the conversational flow, but the percentage of questions the AI can actually resolve isn't improving. The bottleneck is in retrieval accuracy, not conversation design.

Knowledge is getting stale. Your product ships fast and your documentation can't keep up. The AI is confidently referencing old workflows. That's a knowledge freshness problem.

Different AI agents give different answers. Your help center AI, your support chatbot, and your agent assist tool are all pulling from different sources and giving inconsistent answers. A unified knowledge layer solves this.

You can't see which questions aren't being answered. You know your deflection rate, but not which specific questions are failing. A knowledge base with proper analytics surfaces this.
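As a rough illustration of the analytics point above, the sketch below computes a deflection rate and then lists the specific questions that went unresolved. The `Interaction` schema, the `normalize` grouping rule, and the sample log are all hypothetical; a real knowledge base would expose its own reporting.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged AI support exchange (hypothetical schema)."""
    question: str
    resolved: bool  # True if the AI answered without escalating


def normalize(question: str) -> str:
    """Crude grouping key: lowercase, collapse whitespace, drop trailing punctuation."""
    return " ".join(question.lower().split()).rstrip("?.! ")


def deflection_rate(log: list[Interaction]) -> float:
    """Share of interactions the AI resolved on its own."""
    return sum(i.resolved for i in log) / len(log) if log else 0.0


def top_unanswered(log: list[Interaction], n: int = 3) -> list[tuple[str, int]]:
    """Most frequent questions the AI failed to resolve."""
    misses = Counter(normalize(i.question) for i in log if not i.resolved)
    return misses.most_common(n)


# Made-up sample data for illustration only.
log = [
    Interaction("How do I reset my password?", True),
    Interaction("How do I export invoices?", False),
    Interaction("how do I export invoices", False),
    Interaction("Can I change my billing date?", False),
    Interaction("How do I reset my password?", True),
]

print(f"Deflection rate: {deflection_rate(log):.0%}")
for question, count in top_unanswered(log):
    print(f"{count}x unresolved: {question}")
```

The aggregate rate alone says the AI resolved some share of questions. The second report shows which questions to write or fix content for.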

The Failure Pattern

The most common failure mode: teams buy a chatbot expecting it to be smart, and it isn't — because the knowledge behind it is poorly structured, stale, or insufficient.

The intuitive fix is to try a different chatbot. The correct fix is usually to improve the knowledge layer.

Chatbots are largely commoditized at the conversation layer — the major platforms (Intercom, Zendesk, Freshdesk) all produce coherent, contextual responses. What differentiates them is retrieval quality, which is a function of what knowledge they're drawing from.

A better chatbot on bad knowledge is still a bad experience. The same chatbot on well-structured, validated knowledge performs dramatically better.

The Right Architecture

For most SaaS support teams, the right architecture is:

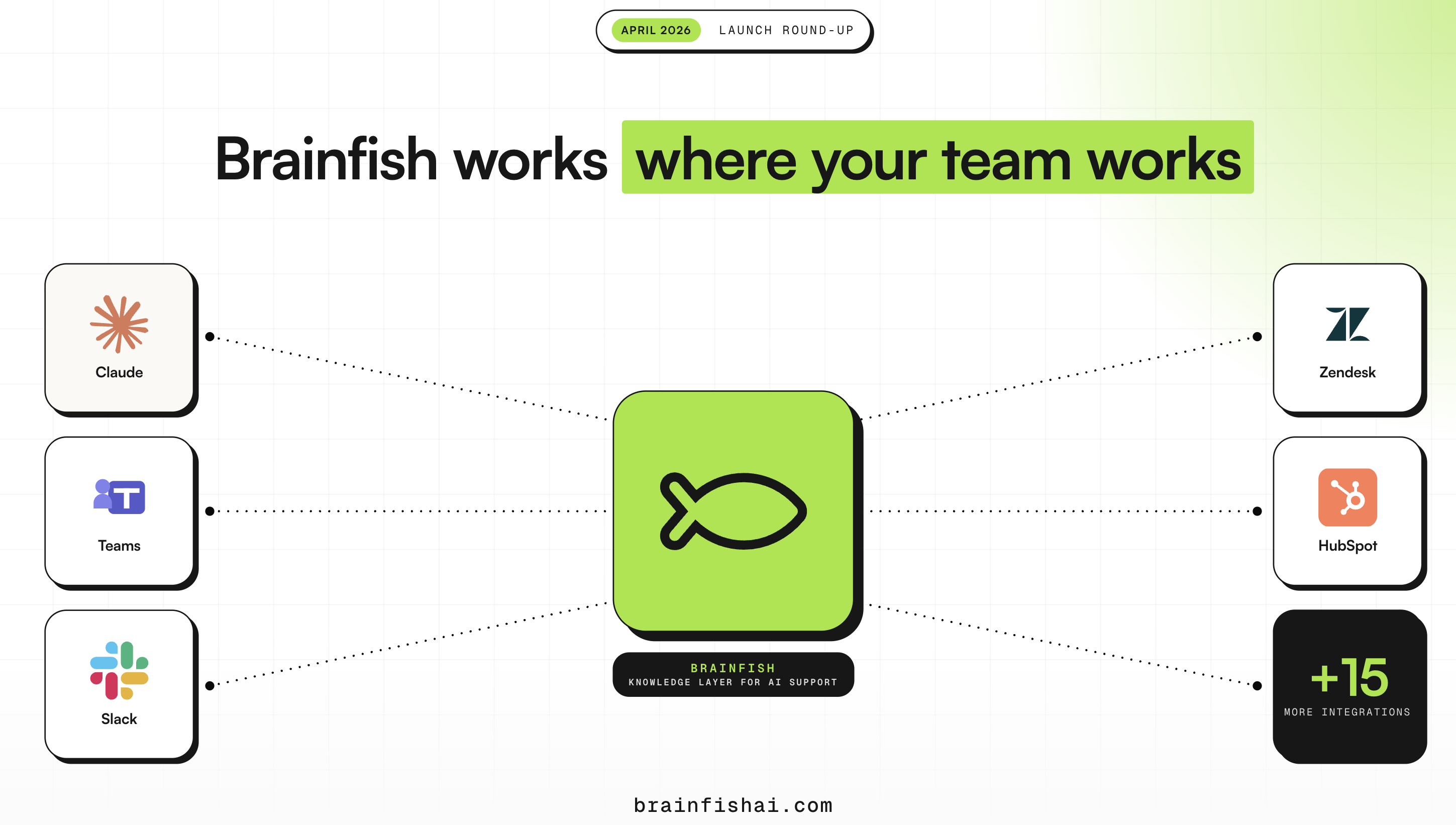

Layer 1 — Knowledge base (Brainfish): Structured, validated, current knowledge. Powers both human-facing self-service (help center, in-product widget) and AI agents (via API). Proactively surfaces freshness risks. Provides analytics on what's not getting answered.

Layer 2 — Chatbot (Intercom, Zendesk, or custom): Conversation interface that queries the knowledge layer. Handles escalation, routing, and multi-turn conversations. Draws its answers from the structured knowledge layer rather than raw documents.

Brainfish + your existing chatbot is a common pattern: you keep the conversation interface you've already built (or that your team is familiar with), and you upgrade the knowledge layer that powers it.
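The two layers can be sketched in a few lines of Python. This is a minimal illustration under assumed names, not any vendor's API: `KnowledgeLayer`, `InMemoryKnowledgeBase`, `Chatbot`, and the `min_confidence` threshold are hypothetical, and the keyword lookup stands in for real retrieval.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class Answer:
    text: str
    source: str        # article the answer came from
    confidence: float  # retrieval score in [0, 1]


class KnowledgeLayer(Protocol):
    """Layer 1: anything that can answer a question from validated content."""
    def lookup(self, question: str) -> Optional[Answer]: ...


class InMemoryKnowledgeBase:
    """Toy stand-in for a real knowledge base; matches on keywords only."""
    def __init__(self, articles: dict[str, str]):
        self.articles = articles  # keyword -> answer text

    def lookup(self, question: str) -> Optional[Answer]:
        for keyword, text in self.articles.items():
            if keyword in question.lower():
                return Answer(text=text, source=keyword, confidence=0.9)
        return None


class Chatbot:
    """Layer 2: owns the conversation and escalation, not the knowledge."""
    def __init__(self, knowledge: KnowledgeLayer, min_confidence: float = 0.7):
        self.knowledge = knowledge
        self.min_confidence = min_confidence

    def reply(self, question: str) -> str:
        answer = self.knowledge.lookup(question)
        # Escalate instead of guessing when the knowledge layer has nothing solid.
        if answer is None or answer.confidence < self.min_confidence:
            return "I'm not sure about that one. Let me connect you with a teammate."
        return f"{answer.text} (source: {answer.source})"


kb = InMemoryKnowledgeBase({"refund": "Refunds are processed within 5 business days."})
bot = Chatbot(kb)
print(bot.reply("How long does a refund take?"))
print(bot.reply("Do you support SSO?"))
```

Because `Chatbot` depends only on the `lookup` interface, the knowledge layer can be swapped or upgraded without touching the conversation layer, which is the pattern described above.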

Making the Decision

Ask yourself:

Are our AI answers accurate? If no → knowledge base problem.

Are we getting meaningful deflection at all? If not, even though the content is good → conversation layer problem.

Are customers abandoning the AI experience? If yes → likely a combination of both.

Is our documentation keeping up with the product? If no → knowledge freshness problem.

Most teams find the knowledge layer is the binding constraint. That's where to invest first.
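The checklist can also be written down as a small function, which makes the mapping from answers to diagnoses explicit. This is a sketch only: the function name and parameters are hypothetical, and it treats "answers are accurate" as a stand-in for "the content is good", which is an assumption rather than something the checklist states.

```python
def diagnose(answers_accurate: bool,
             meaningful_deflection: bool,
             customers_abandoning: bool,
             docs_keeping_up: bool) -> list[str]:
    """Map the four checklist answers to the layers that likely need work."""
    findings = []
    if not answers_accurate:
        findings.append("knowledge base problem")
    # Assumption: accurate answers imply good content, so low deflection
    # in that case points at the conversation layer.
    if answers_accurate and not meaningful_deflection:
        findings.append("conversation layer problem")
    if customers_abandoning:
        findings.append("likely a combination of both")
    if not docs_keeping_up:
        findings.append("knowledge freshness problem")
    return findings


# Example: inaccurate answers and docs lagging behind the product.
print(diagnose(answers_accurate=False,
               meaningful_deflection=True,
               customers_abandoning=False,
               docs_keeping_up=False))
```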

The Bottom Line

A chatbot is the interface. A knowledge base is the source of truth. You need both, but if you can only fix one thing first, fix the thing that's causing your AI to give wrong answers — because no amount of conversational polish compensates for inaccurate retrieval.

For most SaaS support teams in 2026, the knowledge layer is the bottleneck. That's Brainfish's problem to solve.

See what happens when AI support is built on a knowledge layer that actually works. Book a demo →

```python
import time

from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics.
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
# The evaluator reports a fraction (0.05 means 5%), so the unit is the dimensionless "1".
# The synchronous gauge needs a recent opentelemetry-api release.
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="1", description="Fraction of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.perf_counter()  # monotonic clock, suited to measuring durations
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."
            latency = (time.perf_counter() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)
            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")
        # In a real system, you would make an HTTP request to the NeMo Evaluator service
        # (this would also require `import requests` at the top of the file).
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5,
        }
        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```
