Why 'We Need More Training' Is Killing Your Sales Performance — And How AI Enablement Fixes the Real Problem

Published on March 26, 2026

What is AI enablement? AI enablement is the use of artificial intelligence to surface the right knowledge to the right person at the exact moment they need it — replacing static playbooks and reactive training programs with dynamic, real-time knowledge delivery.

The modern enablement engine isn't about producing more content. It's about delivering trusted knowledge at the exact moment it matters.

Sales leaders say it constantly: "We need more training." It's the go-to response when pipeline misses, demos bomb, reps struggle with basic objections. It feels controllable. You can schedule it, measure it, point to it in a QBR.

But what if "we need more training" is the wrong diagnosis entirely?

That was the central insight from a recent conversation between sales enablement leaders Roz Greenfield (Level213), Allie Kaiser, and Dani Wilson — a session that reframed how modern go-to-market teams should think about AI enablement, knowledge infrastructure, and what it actually takes to make reps effective in the moment that matters most: when they're in front of a buyer.

The Real Problem Isn't Training. It's Knowledge Access.

When a rep bombs a demo, misses pipeline, or can't handle a basic objection, the instinct is to add more training. But as Allie explained in the session, the real diagnosis is not a training problem; it's a knowledge access problem. The information exists. The rep typically can't find it, or can't recall it in the moment it matters.

More training is a symptom response. The actual disease is that knowledge isn't accessible in the moment of need.

This distinction is critical for anyone building or scaling an AI enablement strategy. You can pour resources into LMS platforms, onboarding programs, and quarterly training sessions — and still watch adoption lag. Because the rep isn't failing to learn. They're failing to find and apply the right knowledge at the right time.

Key insight: When someone asks "why isn't sales training working," the answer is almost always a knowledge access and delivery problem, not a content volume problem.

Why Enablement Teams Produce More Content Than Ever — But Adoption Still Lags

Here's a paradox every enablement professional knows intimately: content output has never been higher, yet rep adoption keeps declining.

The reason? Most content is created for the wrong moment.

Subject matter experts write documentation through the lens of their own expertise, not through the lens of how a rep will actually use it in a live call. Product teams push out release docs written for engineers, not AEs. Enablement teams respond to every content request without asking: is this the right thing to produce? For whom? When will they use it?

The result is fragmentation — dozens of versions of similar content scattered across Google Drive, Notion, Slack, LMS platforms, and shared drives, none of it surfaced at the moment a rep actually needs it.

Reps learn to stop trusting official enablement materials. Top performers build their own "personal truth files" — private Notion docs, saved Slack messages, handwritten notes. Tribal knowledge that disappears the moment they leave the company.

Content Decay: The Silent Performance Killer in Sales Enablement

What is content decay in sales enablement? Content decay is the process by which enablement materials become outdated, inaccurate, or irrelevant over time — eroding rep confidence and buyer trust without anyone realizing it until the damage is done.

Reps get corrected by prospects on pricing that's six months out of date, or they reference old features that no longer exist. The damage isn't just the wrong information; it's the trust erosion that follows.

A rep learns a battle card. They use it. The prospect corrects them. Now that rep starts hedging — wondering if everything else is wrong too. They stop relying on enablement materials altogether and go back to DMing their favorite solution consultant on Slack.

When Slack DMs become the primary knowledge-sharing channel, you have a findability problem, a trust problem, and an AI enablement opportunity.

Why Enablement Teams Are Being Tapped to Lead AI Adoption

Across organizations, enablement teams are increasingly being asked to evaluate and lead internal AI adoption — and it makes sense.

Enablement's core skill is taking complex information and making it usable. Thinking about how people actually do their jobs. Designing resources for the person, not just the topic. That's exactly what successful AI adoption requires.

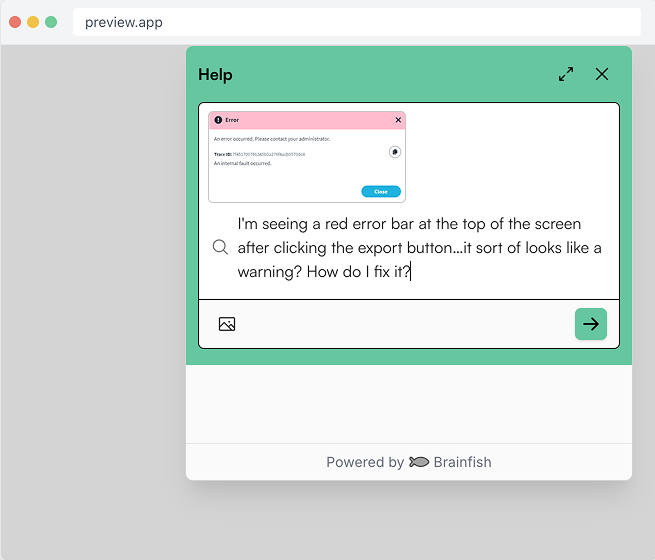

The shift AI enables is profound: from static playbooks to dynamic, just-in-time knowledge delivery. Instead of a rep opening five tabs to prep for a call, they ask a question in natural language and get an accurate, up-to-date answer sourced from your actual documentation — in seconds.

Why enablement is uniquely positioned to lead AI adoption:

- Enablement already owns the knowledge layer

- Enablement thinks about adoption, not just output

- Enablement understands the end user (the rep) better than anyone

- Enablement is the change management function GTM teams already trust

From Static Playbooks to Dynamic, Just-in-Time AI Enablement

What does just-in-time AI enablement actually look like?

The practical reality for most sales orgs today: a new rep gets handed a 150-page onboarding document and told to "go search it." That's not enablement. That's documentation.

Just-in-time AI enablement flips the model from push to pull:

- Old model (push): Reps are expected to read, memorize, and recall everything during onboarding — then left to fend for themselves on live calls

- New model (pull): Reps ask a question in natural language at the exact moment they need it, and get an accurate, verified answer surfaced from current documentation
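
To make the pull model concrete, here is a minimal sketch of the idea: a rep's question is matched against verified documentation only, and the closest document is returned. It assumes simple word overlap as the matching rule, which is a stand-in for whatever retrieval a real platform uses. The `Doc` class, the `verified` flag, and the sample documents are all hypothetical.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    body: str
    verified: bool  # hypothetical flag: has enablement signed off on this doc?


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer(question: str, docs: list[Doc]) -> Doc | None:
    """Return the verified doc sharing the most words with the question."""
    q = tokenize(question)
    best, best_score = None, 0
    for doc in docs:
        if not doc.verified:
            continue  # never surface unverified material
        score = len(q & tokenize(doc.title + " " + doc.body))
        if score > best_score:
            best, best_score = doc, score
    return best


# Hypothetical sample library
docs = [
    Doc("Pricing 2026", "Enterprise tier pricing and discount rules", True),
    Doc("Old pricing", "Enterprise tier pricing from a retired plan", False),
    Doc("Security FAQ", "SOC 2 report and data residency answers", True),
]

hit = answer("What is the enterprise pricing?", docs)
print(hit.title if hit else "No verified answer found")
```

The design choice worth noticing is the filter, not the matching: unverified material is skipped before any scoring happens, so a rep can only ever be handed content someone has vouched for.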

This is where AI stops being a buzzword and starts being a genuine productivity lever. The test is simple: Does it make my rep faster? More confident? More accurate in front of the buyer? If yes, it earns its place in the stack.

The Knowledge Infrastructure Problem — And How AI Solves It

What is sales knowledge infrastructure? Knowledge infrastructure is the system that determines how sales knowledge is created, stored, maintained, and surfaced to reps. Most organizations have fragmented knowledge infrastructure — information scattered across Slack, Notion, call recordings, LMS platforms, and product docs with no intelligent layer connecting them.

The fragmentation isn't just inconvenient. It's operationally expensive.

Every minute a rep spends searching for content is a minute they're not generating revenue. Every piece of outdated information they use in a live conversation is a trust withdrawal with a buyer.

AI enablement platforms that aggregate, organize, and intelligently surface knowledge solve both problems simultaneously:

- Accuracy: Content is automatically flagged when it may be outdated, with suggested edits surfaced to enablement teams before a rep ever uses stale information

- Findability: Reps ask questions in natural language and get answers sourced from verified, up-to-date documentation — without knowing where that documentation lives

- Coverage: The few-to-many problem that has always defined enablement gets addressed. One AI layer serves hundreds of reps with personalized, contextual answers
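
As a rough illustration of the accuracy point, the simplest possible staleness check is a review-date cutoff. The sketch below assumes each asset carries a `last_verified` date and that 90 days is the review window; both are hypothetical choices, and a real platform would likely compare content against source systems rather than rely on dates alone.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Asset:
    name: str
    last_verified: date  # hypothetical field: when someone last confirmed accuracy


def flag_stale(assets, today, max_age_days=90):
    """Return assets not verified within max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [a for a in assets if a.last_verified < cutoff]
    return sorted(stale, key=lambda a: a.last_verified)


# Hypothetical sample assets
assets = [
    Asset("Pricing battle card", date(2025, 9, 1)),
    Asset("Competitor overview", date(2026, 3, 1)),
    Asset("Legacy feature sheet", date(2025, 6, 15)),
]

for asset in flag_stale(assets, today=date(2026, 3, 26)):
    print(f"Review needed: {asset.name} (last verified {asset.last_verified})")
```

Sorting oldest first puts the riskiest assets at the top of the enablement team's review queue.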

The Future: Personalized AI Coaching at Scale

How does AI coaching work in sales? AI coaching uses machine learning to analyze call recordings, rep behavior, and knowledge gaps in real time — delivering personalized feedback, objection handling practice, and talk track reinforcement at the moment it's most relevant.

The vision: every rep has access to a personalized AI coach. After every call, instead of waiting weeks for a manager to find time for a debrief, the rep gets a 3–5 minute AI-powered debrief while the call is still fresh.

But — and this is the critical caveat — AI coaching is only as good as the underlying knowledge layer. If the battle cards are wrong, the pricing is outdated, or the product positioning doesn't reflect the latest release, the coaching feedback will be built on a broken foundation.

AI enablement and AI coaching are not separate investments. They're the same investment. Get the knowledge infrastructure right first, and everything downstream improves.

What This Means for Enablement Teams Right Now

Three shifts worth making immediately:

1. Stop measuring enablement by content volume.

Volume is not the play. Quality, recency, and accuracy are the play. If you're measuring success by the number of assets produced, you're optimizing for the wrong thing.

2. Treat Slack DMs as a signal.

When reps are DMing colleagues for answers instead of using official docs, that's data. Map the most common questions — they're telling you exactly where your knowledge infrastructure is failing.

3. Invest in the knowledge layer before the AI tools.

The best AI enablement tools in the world can't compensate for stale, fragmented, or inaccessible documentation. Foundation first.
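
The second shift, treating Slack DMs as a signal, can start as a simple tally. The sketch below assumes you already have a plain list of message texts (how you export them is out of scope here) and treats any message ending in a question mark as a question. The function names and sample messages are hypothetical.

```python
import re
from collections import Counter


def normalize(question: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", question.lower())
    return " ".join(cleaned.split())


def top_questions(messages, n=3):
    """Count normalized messages that look like questions; return the n most common."""
    questions = [normalize(m) for m in messages if m.strip().endswith("?")]
    return Counter(questions).most_common(n)


# Hypothetical sample messages
messages = [
    "What's our current enterprise pricing?",
    "whats our current enterprise pricing?",
    "Do we support SSO?",
    "Thanks, that helps!",
    "Do we support SSO?",
    "What's our current enterprise pricing?",
]

for question, count in top_questions(messages):
    print(f"{count}x  {question}")
```

Exact-match counting after normalization will miss paraphrases, so treat the output as a starting list of knowledge gaps rather than a complete map.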

Frequently Asked Questions About AI Enablement

What is the difference between sales training and AI enablement?

Sales training is a scheduled, one-time or periodic knowledge transfer event. AI enablement is an always-on system that surfaces relevant knowledge to reps at the exact moment they need it — during call prep, on live calls, or in post-call review.

Why is AI important for sales enablement?

AI solves the two biggest failure modes in traditional enablement: content decay and findability. AI can automatically detect when content may be outdated, suggest updates, and surface the right information to the right rep at the right moment — without requiring the rep to search for it.

How does AI enablement improve sales rep productivity?

By reducing the time reps spend searching for information, eliminating the use of outdated content in buyer conversations, and surfacing personalized knowledge at the moment of need, AI enablement lets reps spend more time doing what they're paid to do: sell.

What is knowledge decay in sales?

Knowledge decay (or content decay) is the gradual process by which sales content becomes inaccurate or outdated. It's a silent performance killer because reps often don't know they're using stale information until a prospect corrects them — eroding both the deal and the rep's confidence.

The Bottom Line

The modern enablement engine isn't about producing more content. It's about delivering trusted knowledge at the exact moment it matters — in a format the rep can actually use, from a source they actually trust, in the time they have available.

AI makes that possible in a way it never was before. But only if enablement teams lead the charge.

Because the problem was never that reps couldn't learn. The problem was that knowledge wasn't there when they needed it.

Want to see how Brainfish helps enablement teams build the knowledge infrastructure that makes AI work? Learn more →

```python
import time

import requests
from opentelemetry import trace, metrics
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor
from opentelemetry.sdk.metrics.export import ConsoleMetricExporter, PeriodicExportingMetricReader

# --- 1. OpenTelemetry Setup for Observability ---
# Configure exporters to print telemetry data to the console.
# In a production system, these would export to a backend like Prometheus or Jaeger.
trace.set_tracer_provider(TracerProvider())
tracer = trace.get_tracer(__name__)
span_processor = SimpleSpanProcessor(ConsoleSpanExporter())
trace.get_tracer_provider().add_span_processor(span_processor)

metric_reader = PeriodicExportingMetricReader(ConsoleMetricExporter())
metrics.set_meter_provider(MeterProvider(metric_readers=[metric_reader]))
meter = metrics.get_meter(__name__)

# Create custom OpenTelemetry metrics
agent_latency_histogram = meter.create_histogram("agent.latency", unit="ms", description="Agent response time")
agent_invocations_counter = meter.create_counter("agent.invocations", description="Number of times the agent is invoked")
hallucination_rate_gauge = meter.create_gauge("agent.hallucination_rate", unit="percentage", description="Rate of hallucinated responses")
pii_exposure_counter = meter.create_counter("agent.pii_exposure.count", description="Count of responses with PII exposure")


# --- 2. Define the Agent using NeMo Agent Toolkit concepts ---
# The NeMo Agent Toolkit orchestrates agents, tools, and workflows, often via configuration.
# This class simulates an agent that would be managed by the toolkit.
class MultimodalSupportAgent:
    def __init__(self, model_endpoint):
        self.model_endpoint = model_endpoint

    # The toolkit would route incoming requests to this method.
    def process_query(self, query, context_data):
        # Start an OpenTelemetry span to trace this specific execution.
        with tracer.start_as_current_span("agent.process_query") as span:
            start_time = time.time()
            span.set_attribute("query.text", query)
            span.set_attribute("context.data_types", [type(d).__name__ for d in context_data])

            # In a real scenario, this would involve complex logic and tool calls.
            print(f"\nAgent processing query: '{query}'...")
            time.sleep(0.5)  # Simulate work (e.g., tool calls, model inference)
            agent_response = f"Generated answer for '{query}' based on provided context."

            latency = (time.time() - start_time) * 1000

            # Record metrics
            agent_latency_histogram.record(latency)
            agent_invocations_counter.add(1)
            span.set_attribute("agent.response", agent_response)
            span.set_attribute("agent.latency_ms", latency)

            return {"response": agent_response, "latency_ms": latency}


# --- 3. Define the Evaluation Logic using NeMo Evaluator ---
# This function simulates calling the NeMo Evaluator microservice API.
def run_nemo_evaluation(agent_response, ground_truth_data):
    with tracer.start_as_current_span("evaluator.run") as span:
        print("Submitting response to NeMo Evaluator...")

        # In a real system, you would make an HTTP request to the NeMo Evaluator service.
        # eval_endpoint = "http://nemo-evaluator-service/v1/evaluate"
        # payload = {"response": agent_response, "ground_truth": ground_truth_data}
        # response = requests.post(eval_endpoint, json=payload)
        # evaluation_results = response.json()

        # Mocking the evaluator's response for this example.
        time.sleep(0.2)  # Simulate network and evaluation latency
        mock_results = {
            "answer_accuracy": 0.95,
            "hallucination_rate": 0.05,
            "pii_exposure": False,
            "toxicity_score": 0.01,
            "latency": 25.5
        }

        span.set_attribute("eval.results", str(mock_results))
        print(f"Evaluation complete: {mock_results}")
        return mock_results


# --- 4. The Main Agent Evaluation Loop ---
def agent_evaluation_loop(agent, query, context, ground_truth):
    with tracer.start_as_current_span("agent_evaluation_loop") as parent_span:
        # Step 1: Agent processes the query
        output = agent.process_query(query, context)

        # Step 2: Response is evaluated by NeMo Evaluator
        eval_metrics = run_nemo_evaluation(output["response"], ground_truth)

        # Step 3: Log evaluation results using OpenTelemetry metrics
        hallucination_rate_gauge.set(eval_metrics.get("hallucination_rate", 0.0))
        if eval_metrics.get("pii_exposure", False):
            pii_exposure_counter.add(1)

        # Add evaluation metrics as events to the parent span for rich, contextual traces.
        parent_span.add_event("EvaluationComplete", attributes=eval_metrics)

        # Step 4: (Optional) Trigger retraining or alerts based on metrics
        if eval_metrics["answer_accuracy"] < 0.8:
            print("[ALERT] Accuracy has dropped below threshold! Triggering retraining workflow.")
            parent_span.set_status(trace.Status(trace.StatusCode.ERROR, "Low Accuracy Detected"))


# --- Run the Example ---
if __name__ == "__main__":
    support_agent = MultimodalSupportAgent(model_endpoint="http://model-server/invoke")

    # Simulate an incoming user request with multimodal context
    user_query = "What is the status of my recent order?"
    context_documents = ["order_invoice.pdf", "customer_history.csv"]
    ground_truth = {"expected_answer": "Your order #1234 has shipped."}

    # Execute the loop
    agent_evaluation_loop(support_agent, user_query, context_documents, ground_truth)

    # In a real application, the metric reader would run in the background.
    # We call it explicitly here to see the output.
    metric_reader.collect()
```
